The Automatic Run Processor (or ARP, for short, because I want that to catch on) is a web service that allows for the easier submission of batch jobs via a web interface. A script that submits the batch job (to allow for more customization in this command) is all that is needed for this system to work.

1) Webpage

To use this system, choose an experiment from https://pswww.slac.stanford.edu/lgbk/lgbk/experiments.

1.1) Workflow Definitions

Under the Workflow dropdown, select Definitions. The Workflow Definitions tab is the location where scripts are defined; scripts are registered with a unique name. Any number of scripts can be registered. To register a new script, please click on the + button on the top right hand corner.

1.1.1) Name/Hash

A unique name given to a registered script; the same script can be registered under different names with different parameters.

1.1.2) Executable

The absolute path to the batch script. An example can be seen here. This script must contain the batch job submission command (bsub/sbatch) since it gives the user the ability to customize the the batch submission. Overall, it can act as a wrapper for the code that will do the analysis on the data along with submitting the job.

1.1.3) Parameters

The parameters that will be passed to the executable. Any number of name value pairs can be associated with a job definition. These are then made available to the above executable as environment variables. For example, in the above example, one can obtain the value of the parameter LOOP_COUNT (100) in a bash script by using ${LOOP_COUNT}. In addition, the following additional environment variables are made available to the script

- EXPERIMENT - The name of the experiment; in the example shown above, diadaq13.

- RUN_NUM - The run number for the job.

JID_UPDATE_COUNTERS - This is a URL that can be used to update the progress of the job. These updates are also automatically reflected in the UI.

1.1.4) Location

This defines where the analysis is done. While many experiments prefer to use the psana cluster (SLAC) to perform their analysis, some experiments prefer to use HPC facilities like NERSC to perform their analysis.

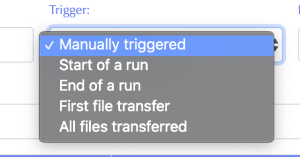

1.1.4) Trigger

This defines the event that in the data management system that kicks off the job submission.

- Manually triggered - The user will manually trigger the job in the Workflow Control tab.

- Start of a run - When the DAQ starts a new run.

- End of a run - When the DAQ closes a run.

- First file transfer - When the data movers indicate that the first registered file for a run has been transferred and is available at the job location.

- All files transferred - When the data movers indicate that all registered files for a run have been transferred and are available at the job location.

1.1.5) As user

The job will be executed as this user.

1.1.6) Edit/delete job

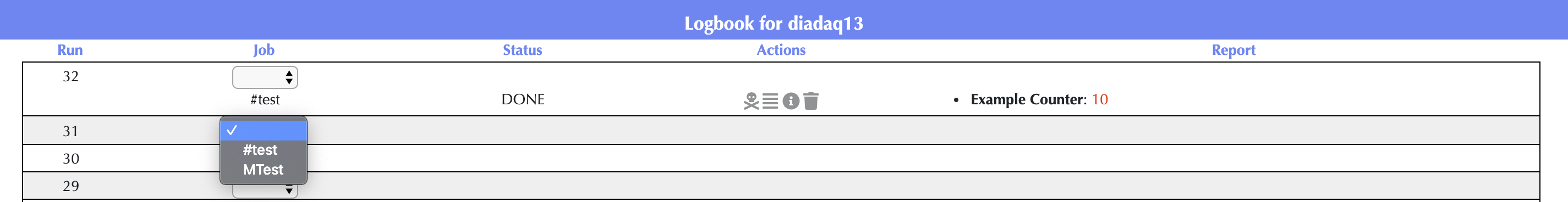

1.2) Workflow Control

Under the Workflow dropdown, select Control to create and check the status of your analysis jobs. The Control tab is where job definitions defined in the Definitions tab may be applied to experiment runs. An entry is automatically created for jobs that are triggered automatically. To manually trigger a job, in the drop-down menu of the Job column, select the job. A job can be triggered any number of times; each execution has a separate row in the UI.

1.2.1) Status

These are the different statuses that a job can have -

- START - Pending submission to the HPC workload management infrastructure.

- SUBMITTED - The job has been submitted to the HPC workload management infrastructure. A job may stay in the SUBMITTED for some time depending on how busy the queues are.

- RUNNING - The job is currently running. One can get job details and the log file. The job can also potentially be killed.

- EXITED - The job has finished unsuccessfully. The log files may have some additional information.

- DONE - The job has finished successfully. The job details and log files may be available; most HPC workload management systems delete this information after some time.

1.2.2) Actions

There are four different actions which can be applied to a script. They do the following if pressed:

- Attempt to kill the job. A green success statement will appear near the top-right of the page if the job is killed successfully and a red failure statement will appear if the job is not killed successfully.

- Returns the log file for the job. If there is no log file or if no log file could be found, it will return blank.

- Returns details for the current job by invoking the the appropriate job details command in the HPC workload management infrastructure.

- Delete the job execution from the run. Note: this does not kill the job, it only removes it from the webpage.

1.2.3) Report

This is a customizable column which can be used by the script executable to report progress. The script executable reports progress by posting JSON to a URL that is available as the environment variable JID_UPDATE_COUNTERS.

For example, to update the status of the job using bash, one can use

curl -s -XPOST ${JID_UPDATE_COUNTERS} -H "Content-Type: application/json" -d '[ {"key": "<b>LoopCount</b>", "value": "'"${i}"'" } ]'

In Python, one can use

import os

import requests

requests.post(os.environ["JID_UPDATE_COUNTERS"], json=[ {"key": "<b>LoopCount</b>", "value": "75" } ])

2) Hash Script

The following example scripts live at /reg/g/psdm/web/ws/test/apps/release/logbk_batch_client/test/submit.sh and /reg/g/psdm/web/ws/test/apps/release/logbk_batch_client/test/submit.py.

2.1) submit.sh

The script that the hash corresponds to is the one that submits the job via the bsub command. This script is shown below.

#!/bin/bash ABS_PATH=/reg/g/psdm/web/ws/test/apps/logbk_batch_client/test bsub -q psdebugq -o $ABS_PATH/logs/%J.log python $ABS_PATH/submit.py "$@"

This script will run the batch job on psdebugq and store the log files in /reg/g/psdm/web/ws/test/apps/release/logbk_batch_client/test/logs/<lsf_id>. Also, it will pass all arguments passed to it to the python script, submit.py (these would be the parameters entered in the Batch defs tab).

2.2) submit.py

The Python script is the code that will do analysis and whatever is necessary on the run data. Since this is just an example, the Python script, submit.py, doesn't get that involved. It is shown below.

from time import sleep

from requests import post

from sys import argv

from os import environ

from numpy import random

from string import ascii_uppercase

print 'This is a test function for the batch submitting.\n'

## Fetch the URL to POST to

update_url = environ.get('BATCH_UPDATE_URL')

print 'The update_url is:', update_url, '\n'

## Fetch the passed arguments as passed by submit.sh

params = argv

print 'The parameters passed are:'

for n, param in enumerate(params):

print 'Param %d:' % (n + 1), param

print '\n'

## Run a loop, sleep a second, then POST

for i in range(10):

sleep(1)

rand_char = random.choice(list(ascii_uppercase))

print 'Step: %d, %s' % (i + 1, rand_char)

post(update_url, json={'counters' : {'Example Counter' : [i + 1, 'red'],

'Random Char' : rand_char}})

2.3) Log File Output

The print statements print out to the run's log file. The output of submit.py is below. The first parameter is the path to the Python script, the second is the experiment name, the third is the run number and the rest are the parameters passed to the script.

This is a test function for the batch submitting. The update_url is: http://psanaphi110:9843//ws/logbook/client_status/450 The parameters passed are: Param 1: /reg/g/psdm/web/ws/test/apps/logbk_batch_client/test/submit.py Param 2: xppi0915 Param 3: 134261 Param 4: param1 Param 5: param2 Step: 1, R Step: 2, J Step: 3, T Step: 4, P Step: 5, S Step: 6, B Step: 7, E Step: 8, K Step: 9, X Step: 10, V

3.0 Frequently Asked Questions (FAQ)

Is it possible to submit more than one job per run?

- Yes, each run can accept multiple hashtags.

Can a submitted job submit other subjobs?

- Yes, in a standard LSF fashion, BUT the ARP will not know about the subjobs. Only jobs submitted through the ARP webpage are known to the ARP.

When using the 'kill' option, how does ARP know which jobs to kill?

- The ARP keeps track of the hashtags for each run and the associated LSF jobid. That information allows the ARP to kill jobs.

As far as I understand there is a json entry for each line which stores info, can one access this json entry somehow?

- The JSON values are displayed in the ARP webpage automatically. To access them programmatically, use the kerberos endpoint

ws-kerb/batch_manager/ws/logbook/batches_status/<experiment_id>.

For example, this gives the batch processing status for experiment id 302import requests from krtc import KerberosTicket from urllib.parse import urlparse ws_url = "https://pswww.slac.stanford.edu/ws-kerb/batch_manager/ws/logbook/batches_status/302" krbheaders = KerberosTicket("HTTP@" + urlparse(ws_url).hostname).getAuthHeaders() r = requests.get(ws_url, headers=krbheaders) print(r.json())