Access to data and SLURM partitions

The FFB system is designed to provide dedicated analysis capabilities during the experiment. One week after the end of the experiment, the data files are deleted from the FFB and remain available only on one of the offline systems (psana, SDF, or NERSC).

Note 1: The FFB currently offers the fastest file system (WekaIO on NVMe disks via IB HDR100) of all LCLS storage systems, but its size is limited to just over 400TB. LCLS may be forced to remove data from the FFB before the nominal week has passed if the running experiments are particularly data intensive.

Note 2: The raw data are copied to the offline storage system and to tape immediately, i.e. in quasi real time during the experiment, not after they have been deleted from the FFB. User-generated data created in the scratch/ folder are moved to the offline system when the experiment is deleted from the FFB, as described in Lifetime of data on the FFB.

Note 3: For the time being, the new FFB system will be available only for FEH experiments. NEH experiments will still rely on psana resources.

You can access the FFB system from pslogin, psdev or psnx with:

% ssh psffb

The experiment data will be available under:

/cds/data/drpsrcf/<instrument>/<experiment>

There are two options for telling psana which directory the data is in. One can add the "dir=" keyword to the psana DataSource, like this:

dsource = DataSource('exp=cxilu9218:run=20:smd:dir=/cds/data/drpsrcf/cxi/cxilu9218/xtc')

or one can set the following environment variable:

export SIT_PSDM_DATA=/cds/data/drpsrcf
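As a minimal sketch of the first option, the DataSource string can be assembled from the instrument, experiment and run number. The helper name `ffb_datasource_string` is hypothetical and not part of psana; it only builds the string, so it runs without psana installed.

```python
def ffb_datasource_string(instrument, experiment, run, ffb_root="/cds/data/drpsrcf"):
    """Build a psana DataSource string that points at the xtc folder on the FFB.

    Hypothetical helper for illustration; it is not part of psana.
    """
    # The FFB layout described above: <ffb_root>/<instrument>/<experiment>/xtc
    xtc_dir = f"{ffb_root}/{instrument}/{experiment}/xtc"
    return f"exp={experiment}:run={run}:smd:dir={xtc_dir}"


ds_string = ffb_datasource_string("cxi", "cxilu9218", 20)
print(ds_string)

# On a node where psana is available, the string is used as in the text:
#   from psana import DataSource
#   dsource = DataSource(ds_string)
```

For the experiment and run used above, this reproduces the string shown in the DataSource example.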

The experiment folder names are the same as on the offline systems and are described in the data retention policy.

You can submit your fast feedback analysis jobs to one of the queues shown in the following table. The goal is to assign dedicated resources to up to three experiments for each shift. Please contact your POC to get one of the high priority queues, 1, 2 or 3, assigned to your experiment.

| Queue | Comments | Throughput | Nodes | Cores/node | RAM [GB/node] | Default |
|---|---|---|---|---|---|---|
| anaq | For the week after the experiment | 100 | 82 | 61 | 128 | 12hrs |
| ffbl1q | Off-shift queue for experiment 1 | 100 | 8 | 61 | 128 | 12hrs |
| ffbl2q | Off-shift queue for experiment 2 | 100 | 18 | 61 | 128 | 12hrs |
| ffbl3q | Off-shift queue for experiment 3 | 100 | 56 | 61 | 128 | 12hrs |
| ffbh1q | On-shift queue for experiment 1 | 100 | 8 | 61 | 128 | 12hrs |
| ffbh2q | On-shift queue for experiment 2 | 100 | 18 | 61 | 128 | 12hrs |
| ffbh3q | On-shift queue for experiment 3 | 100 | 56 | 61 | 128 | 12hrs |

Note that jobs submitted to ffbl<n>q will preempt jobs submitted to anaq and jobs submitted to ffbh<n>q will preempt jobs submitted to ffbl<n>q and anaq. Jobs that are preempted to make resources available to higher priority queues are paused and then are automatically resumed when resources become available.

The FFB system uses SLURM for submitting jobs - information on using SLURM can be found on the Submitting SLURM Batch Jobs page.
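As a rough sketch of how a submission to one of these queues might be composed, the snippet below builds an sbatch command line and rejects queue names that are not in the table. The script name `run_analysis.sh` and the task count are placeholders, and the helper `sbatch_command` is hypothetical; `--partition` and `--ntasks` are standard sbatch options. Nothing is submitted; the command is only printed.

```python
import shlex

# Queue names taken from the table above.
FFB_QUEUES = {"anaq", "ffbl1q", "ffbl2q", "ffbl3q", "ffbh1q", "ffbh2q", "ffbh3q"}


def sbatch_command(queue, script, ntasks=1):
    """Return the sbatch argument list for a job on one of the FFB queues."""
    if queue not in FFB_QUEUES:
        raise ValueError(f"unknown FFB queue: {queue}")
    return ["sbatch", f"--partition={queue}", f"--ntasks={ntasks}", script]


# Placeholder script name and task count, for illustration only.
cmd = sbatch_command("ffbh1q", "run_analysis.sh", ntasks=16)
print(shlex.join(cmd))
```

The resulting list can be handed to `subprocess.run` on a node where sbatch is available.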

FFB File Permissions

The FFB uses the same ACL-based permissions as the Lustre analysis filesystems. However, there is an issue with the current version of the file system:

- the umask is applied when creating files and directories, which violates the ACL specs. As the default umask is 022, the group write permission is removed. We recommend setting your umask to:

% umask 0002
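The effect of the two umask values can be checked with a little bit arithmetic. The sketch below assumes the usual requested modes of 0666 for new files and 0777 for new directories, and clears the bits set in the umask; the function name `mode_after_umask` is only illustrative.

```python
def mode_after_umask(requested_mode, umask):
    """Permission bits left after the umask bits are cleared."""
    return requested_mode & ~umask


for umask in (0o022, 0o002):
    file_mode = mode_after_umask(0o666, umask)  # typical request for new files
    dir_mode = mode_after_umask(0o777, umask)   # typical request for new directories
    print(f"umask {umask:04o}: files {file_mode:04o}, directories {dir_mode:04o}")
```

With 022 the group write bit is cleared (0644 and 0755), while 0002 keeps it (0664 and 0775).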

Lifetime of data on the FFB

This section is in progress.

xtc folder

- xtc files are immediately copied to the offline filesystem

- the lifetime on the ffb is dictated by how much data is generated

- typically files stay on the ffb during the run-time of an experiment

- however, if space is needed, files from previous shifts might get purged

- after an experiment is done, the ffb should not be used anymore unless this has been discussed with the POC

scratch folder

Once an experiment has been completed and all xtc files have been removed, the ffb scratch folder is moved to the experiment's scratch folder on the ana-filesystems. The following rules are applied:

- the ffb scratch folder is made inaccessible to the experiment

- files and directories below the ffb scratch/ are moved to the scratch/ffb/ on the offline filesystem: /reg/d/psdm/<instr>/<expt>/scratch/ffb/ except for hdf5 files in the smalldata folder (see next).

- hdf5 files below scratch/hdf5/smalldata/ are moved to the hdf5/smalldata/ folder on the offline filesystem, e.g.

/cds/data/drpsrcf/mfx/mfx123456/scratch/smalldata/*.h5 -> /cds/data/psdm/psdm/mfx/mfx123456/hdf5/smalldata/

(Only .h5 files are moved to hdf5/smalldata/; other files are moved below scratch/ffb/.)
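The rules above can be sketched as a small path mapping. This is only an illustration under stated assumptions: the smalldata folder is taken to sit directly below scratch/ as in the example path, the offline root defaults to /reg/d/psdm as in the rule above and can be overridden, and the function name and file names are hypothetical.

```python
from pathlib import PurePosixPath


def offline_destination(ffb_path, instrument, experiment,
                        ffb_root="/cds/data/drpsrcf",
                        offline_root="/reg/d/psdm"):
    """Return where a file below the ffb scratch/ folder would end up offline.

    Assumption: smalldata/ sits directly below scratch/, as in the example path.
    """
    ffb_scratch = PurePosixPath(ffb_root) / instrument / experiment / "scratch"
    offline_exp = PurePosixPath(offline_root) / instrument / experiment
    # Raises ValueError if ffb_path is not below the experiment's ffb scratch/.
    relative = PurePosixPath(ffb_path).relative_to(ffb_scratch)
    # Only .h5 files below smalldata/ go to hdf5/smalldata/.
    if relative.parts[:1] == ("smalldata",) and relative.suffix == ".h5":
        return offline_exp / "hdf5" / relative
    # Everything else keeps its relative path below scratch/ffb/.
    return offline_exp / "scratch" / "ffb" / relative


# Hypothetical file names, for illustration only.
h5_dest = offline_destination(
    "/cds/data/drpsrcf/mfx/mfx123456/scratch/smalldata/run0001.h5", "mfx", "mfx123456")
other_dest = offline_destination(
    "/cds/data/drpsrcf/mfx/mfx123456/scratch/results/summary.txt", "mfx", "mfx123456")
print(h5_dest)
print(other_dest)
```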

Access to Lustre ana-filesystems

The batch nodes also have access to the ana Lustre filesystems. For example, the calib folder /cds/data/drpsrcf/<instrument>/<experiment>/calib is a link to the folder on the ana filesystem.

The bandwidth to the ana-filesystems is very limited, so they must be used only for light loads, e.g. reading calibration constants.

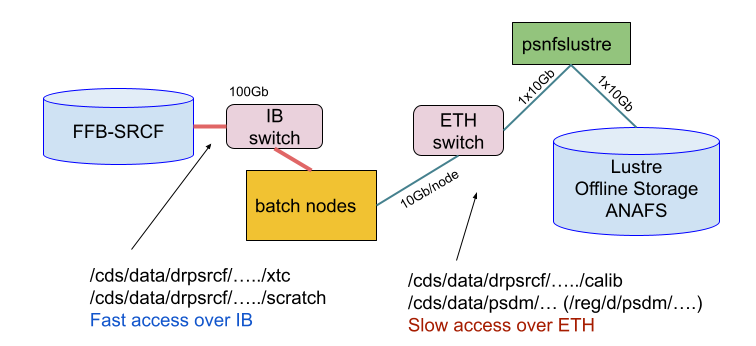

The figure above shows the connectivity of the nodes:

- Each FFB file server (16 of them) has a 100Gb/s IB connection

- Each batch node has a 100Gb/s IB connection

- Batch nodes have either a 10Gb/s or a 1Gb/s Ethernet connection

- All Ethernet Lustre access eventually goes through psnfslustre02 and is limited to 10Gb/s

- The figure also shows which network is used for the different file paths
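To put the link speeds listed above in perspective, the following back-of-the-envelope sketch computes the ideal time to move 1 TB over a single 100Gb/s IB link and over the 10Gb/s Ethernet path to Lustre. It assumes the full nominal line rate with no protocol overhead or sharing, so real transfers will be slower, and the 10Gb/s path is shared by all nodes.

```python
def ideal_transfer_seconds(size_bytes, link_gbit_per_s):
    """Lower bound on transfer time at the nominal line rate (no overhead)."""
    return size_bytes * 8 / (link_gbit_per_s * 1e9)


ONE_TB = 1e12  # bytes

for label, rate in (("IB link of one batch node", 100),
                    ("Ethernet path to Lustre", 10)):
    seconds = ideal_transfer_seconds(ONE_TB, rate)
    print(f"{label} ({rate} Gb/s): {seconds:.0f} s per TB")
```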