
...

The S3DF (SLAC Shared Science Data Facility) is a new SLAC-wide computing facility. The S3DF is replacing the current PCDS compute and storage resources used for LCLS experiment data processing. Since August 2023, the data for new experiments are available only in the S3DF (and on the FFB during data collection).

A simplified layout of the DRP and S3DF storage and compute nodes.

S3DF main page: https://s3df.slac.stanford.edu/public/doc/#/

S3DF accounts & access: https://s3df.slac.stanford.edu/public/doc/#/accounts-and-access (your unix account will need to be activated on the S3DF system)

| Warning |

|---|

|

...

| /sdf/group/lcls/ds/ana | psana1/psana2 releases, detector calibration, ... |

| /sdf/group/lcls/ds/tools | smalldata-tools, cctbx, crystfel, om, ... |

| /sdf/group/lcls/ds/dm | data-management releases and tools. |

...

Experimental Data

The experiment analysis storage and the fast feedback (FFB) storage are accessible on the interactive and batch nodes.

...

| Warning |

|---|

So far, data are copied to the S3DF only on request, and run restores go to the PCDS Lustre file systems. |

Scratch

A scratch folder is provided for every experiment and is accessible using the canonical path

/sdf/data/lcls/ds/<instr>/<expt>/<expt-folders>/scratch

The scratch folder lives on a dedicated high performance file system and the above path is a link to this file system.

In addition to the experiment scratch folder, each user has their own scratch folder:

/sdf/scratch/users/<first character of name>/<user-name> (e.g. /sdf/scratch/users/w/wilko)

The user scratch space is limited to 100GB. As with the experiment scratch folder, old files are removed automatically when the scratch file system fills up. The df command shows the usage of a user's scratch folder.
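The per-user path layout described above can be derived from an account name. The following is a minimal sketch; the function name user_scratch_dir is hypothetical and only illustrates the `<first character of name>/<user-name>` convention.

```shell
# Print the per-user scratch directory for an account name, following the
# /sdf/scratch/users/<first character of name>/<user-name> layout.
# (user_scratch_dir is a hypothetical helper, not an S3DF tool.)
user_scratch_dir() (
    user="$1"
    first=$(printf '%s' "$user" | cut -c1)
    printf '/sdf/scratch/users/%s/%s\n' "$first" "$user"
)

# Example with the account name used on this page
user_scratch_dir wilko
```

On an S3DF node, `df -h "$(user_scratch_dir "$USER")"` would then report the usage of your own scratch folder.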

Large User Software And Conda Environments

If you have a large software package that needs to be installed in the S3DF, contact pcds-datamgt-l@slac.stanford.edu. We can create a directory for you under /sdf/group/lcls/ds/tools.

A directory has been created under /sdf/group/lcls/ds/tools/conda_envs where users can create large conda environments (see Installing Your Own Python Packages for instructions).

S3DF Documentation

S3DF-maintained facility documentation can be found here.

...

To access data and submit to Slurm use the interactive cluster (ssh psana).

psana1 (LCLS-I data: XPP, XCS, MFX, CXI, MEC)

| No Format |

|---|

% source /sdf/group/lcls/ds/ana/sw/conda1/manage/bin/psconda.sh [-py2] |

By default, the command above activates the most recent Python 3 version of psana. New psana versions no longer support Python 2, but it is still possible to activate the last available Python 2 environment (ana-4.0.45) using the -py2 option.

psana2 (LCLS-II data: TMO, TXI, RIX)

| No Format |

|---|

% source /sdf/group/lcls/ds/ana/sw/conda2/manage/bin/psconda.sh |

Other LCLS Conda Environments in S3DF

- There is a simpler environment used for analyzing hdf5 files. Use "source /sdf/group/lcls/ds/ana/sw/conda2/manage/bin/h5ana.sh" to access it. It is also available in jupyter.

Batch processing

The S3DF batch compute link describes the Slurm batch processing. Here we will give a short summary relevant for LCLS users.

- A partition and a Slurm account should be specified when submitting jobs. The Slurm account is lcls:<experiment-name>, e.g. lcls:xpp123456. The account is used to keep track of resource usage per experiment.

| Code Block |

|---|

% sbatch -p milano --account lcls:xpp1234 ........ |

You must be a member of the experiment's Slurm account, which you can check with a command like this:

| Code Block |

|---|

sacctmgr list associations -p account=lcls:xpp1234 |

- Jobs submitted under the lcls account can only be preemptable and must specify --qos preemptable. The lcls account is set by --account lcls or --account lcls:default (Slurm automatically translates the lcls name to lcls:default, and the s-commands will show the default one).

- In the S3DF, memory is limited to 4GB/core by default. Usually that is not an issue, as processing jobs use many cores (e.g. a job with 64 cores would request 256GB of memory).

- The memory limit is enforced: a job that exceeds it fails with an OUT_OF_MEMORY status.

- Memory can be increased using the --mem sbatch option (e.g. --mem 16G; the default unit is megabytes).

- The default total run time is 1 day; the --time option increases or decreases it.
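The options listed above can be collected in a job script. The sketch below writes one to a file; the file name s3df_job.sh, the script my_analysis.py, the task count, and the memory and time values are placeholders chosen for illustration, and lcls:xpp1234 is the example account used on this page.

```shell
# Write a minimal job script to the current directory (file name is arbitrary).
cat > s3df_job.sh <<'EOF'
#!/bin/bash
#SBATCH --partition=milano
#SBATCH --account=lcls:xpp1234
#SBATCH --nodes=1
#SBATCH --ntasks=8
# 8 cores x 4GB default = 32GB; request more explicitly if the job needs it
#SBATCH --mem=64G
# Override the 1 day default run time (here: 2 hours)
#SBATCH --time=02:00:00

# Set up the psana1 environment (XPP is an LCLS-I instrument)
source /sdf/group/lcls/ds/ana/sw/conda1/manage/bin/psconda.sh

# my_analysis.py is a hypothetical user script
mpirun python my_analysis.py
EOF

# Submit from an interactive node with:
#   sbatch s3df_job.sh
```

Options given on the sbatch command line take precedence over the `#SBATCH` lines, so a single script can be reused across experiments by passing a different `--account`.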

Number of cores

| Warning |

|---|

Some cores of a milano batch node are used exclusively for file I/O (WekaFS). Therefore, although a milano node has 128 cores, only 120 can be used. Submitting a task with --nodes 1 --ntasks-per-node=128 would fail with: Requested node configuration is not available |

Environment variables: sbatch options can also be set via environment variables, which is useful when a program that calls sbatch does not allow options to be set on the command line, e.g.:

| Code Block |

|---|

% SLURM_ACCOUNT=lcls:experiment executable-to-run [args]

or

% export SLURM_ACCOUNT=lcls:experiment

% executable-to-run [args]

|

The environment variables are SBATCH_MEM_PER_NODE (--mem), SLURM_ACCOUNT (--account) and SBATCH_TIMELIMIT (--time). Options are applied in the following order of precedence: command line, environment, then directives within the sbatch script.

Real-time Analysis Using A Reservation For A Running Experiment

Currently we are reserving nodes for experiments that need real-time processing. This is an example of parameters that should be added to a slurm submission script:

| Code Block |

|---|

#SBATCH --reservation=lcls:onshift

#SBATCH --account=lcls:tmoc00221 |

You must be a member of the experiment's slurm account which you can check with a command like this:

| Code Block |

|---|

sacctmgr list associations -p account=lcls:tmoc00221 |

and your experiment must have been added to the reservation permissions list. This can be checked with this command:

| Code Block |

|---|

(ps-4.6.1) scontrol show res lcls:onshift

ReservationName=lcls:onshift StartTime=2023-08-02T10:39:06 EndTime=2023-12-31T00:00:00 Duration=150-14:20:54

Nodes=sdfmilan[001,014,022,033,047,059,062,101,127,221-222,226] NodeCnt=12 CoreCnt=1536 Features=(null) PartitionName=milano Flags=IGNORE_JOBS

TRES=cpu=1536

Users=(null) Groups=(null) Accounts=lcls:tmoc00221,lcls:xppl1001021,lcls:cxil1022721,lcls:mecl1011021,lcls:xcsl1004621,lcls:mfxx1004121,lcls:mfxp1001121 Licenses=(null) State=ACTIVE BurstBuffer=(null) Watts=n/a

MaxStartDelay=(null)

(ps-4.6.1)

|

You can use the reservation only if your experiment is on-shift or off-shift. If you think the Slurm settings are incorrect for your experiment, email pcds-datamgt-l@slac.stanford.edu.
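The reservation check above can be scripted by searching the Accounts= field of the scontrol output. This is a minimal sketch: account_in_reservation is a hypothetical helper, and it assumes the output format shown in the example above.

```shell
# Succeeds if the given Slurm account appears in the Accounts= field of
# `scontrol show res` output read from standard input.
# (account_in_reservation is a hypothetical helper, not a Slurm command.)
account_in_reservation() (
    account="$1"
    # One field per line, keep the Accounts= list, one account per line,
    # then require an exact whole-line match.
    tr ' ' '\n' | sed -n 's/^Accounts=//p' | tr ',' '\n' | grep -qxF -- "$account"
)

# On an S3DF node:
#   scontrol show res lcls:onshift | account_in_reservation lcls:tmoc00221 \
#       && echo "experiment is on the reservation list"
```

The exact match matters because experiment names share prefixes; a plain substring search for a shorter name could match a different experiment.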

MPI and Slurm

To run MPI jobs on the S3DF Slurm cluster, use mpirun (or related tools). Using srun to run mpi4py will fail because it requires PMIx, which is not supported by the installed Slurm version. Example psana MPI submission scripts are here: Submitting SLURM Batch Jobs

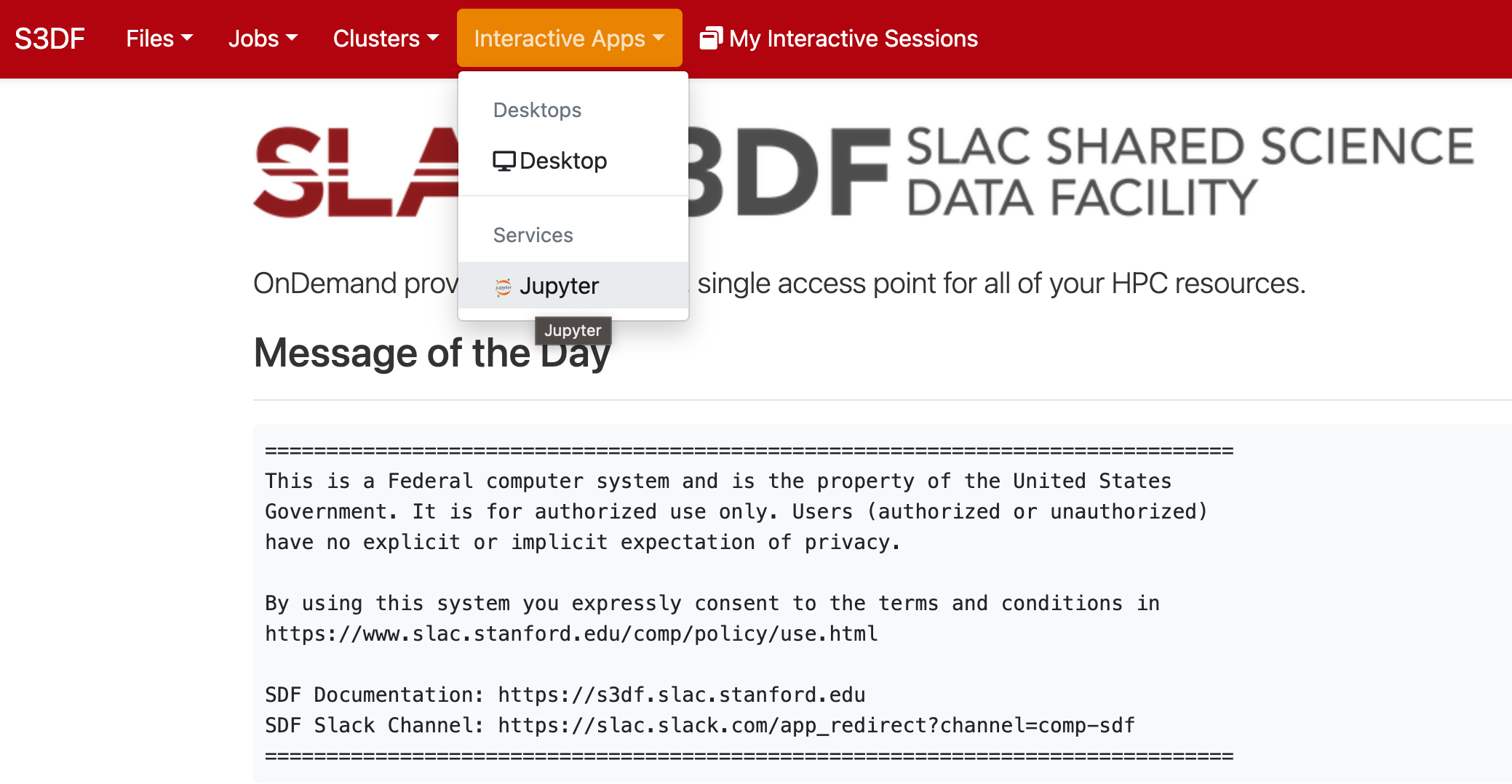

Jupyter

Jupyter is provided by the onDemand service. (We are not planning to run a standalone JupyterHub as is done at PCDS. For more information, check the S3DF interactive compute docs.)

| Info |

|---|

It is imperative that you ssh to the S3DF at least once so that your home directory is set up before trying to access Jupyter. To do so, run ssh <username>@s3dflogin.slac.stanford.edu from a terminal. |

| Warning |

|---|

onDemand requires a valid ssh key (~/.ssh/s3df/id_ed25519). If you don't have this key (older accounts in particular might not), create it by running the following command on a login or interactive node: /sdf/group/lcls/ds/dm/bin/generate-keys.sh If you still have issues logging in, go to https://vouch.slac.stanford.edu/logout and try logging in again. |
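Whether the key is already present can be checked before running the generator. The sketch below assumes the key file is named id_ed25519 under ~/.ssh/s3df; have_s3df_key is a hypothetical helper, and its optional argument exists only so the check can be pointed at another path.

```shell
# Succeeds if the S3DF ssh key file exists.
# The default path assumes the key is named id_ed25519 (an assumption);
# pass a different path as the first argument to override it.
have_s3df_key() {
    [ -f "${1:-$HOME/.ssh/s3df/id_ed25519}" ]
}

# On a login or interactive node:
#   have_s3df_key || /sdf/group/lcls/ds/dm/bin/generate-keys.sh
```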

Please note that the "Files" application cannot read ACLs which means that (most likely) you will not be able to access your experiment directories from there. Jupyterlab (or interactive terminal session) from the psana nodes will not have this problem.

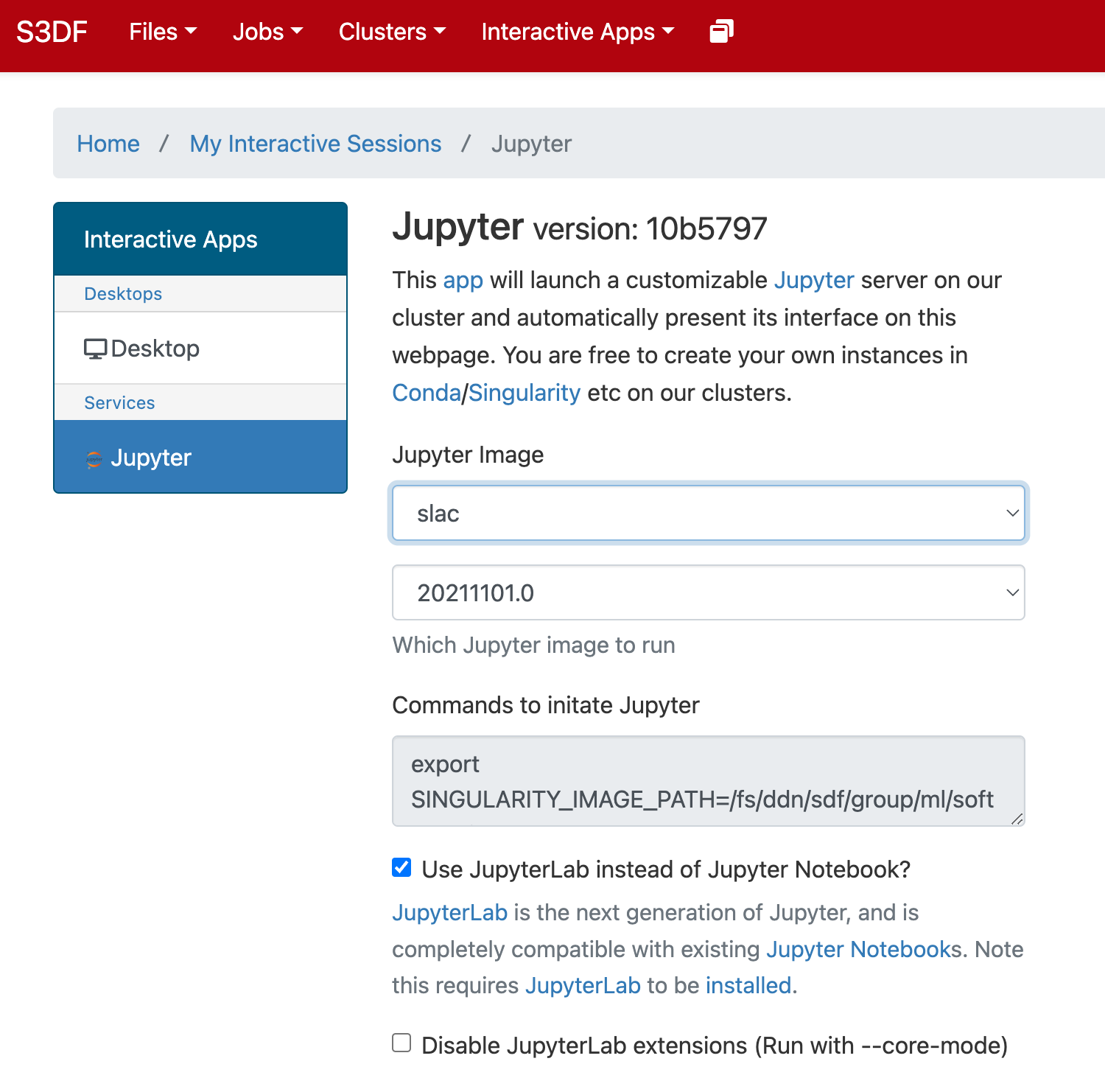

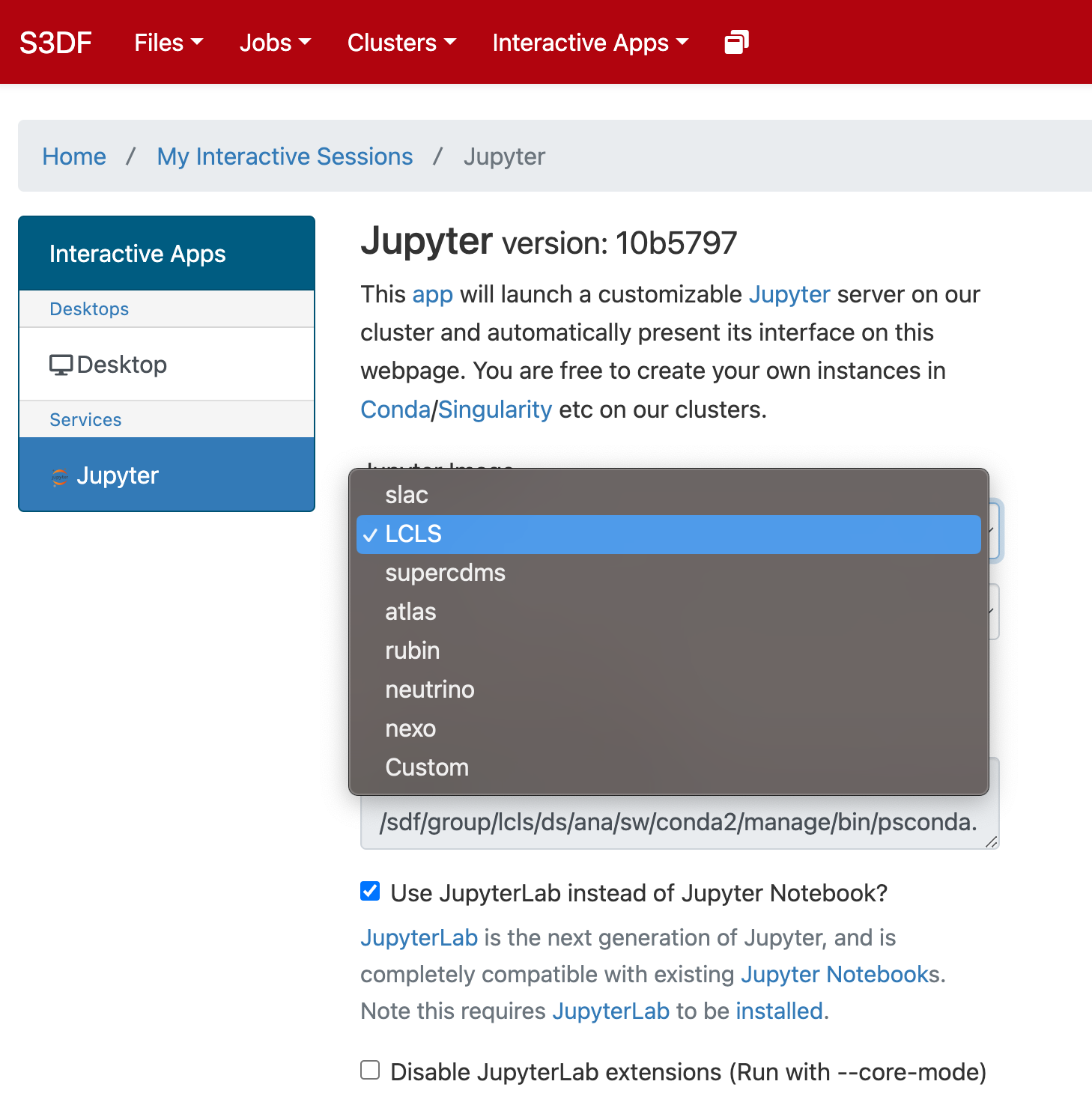

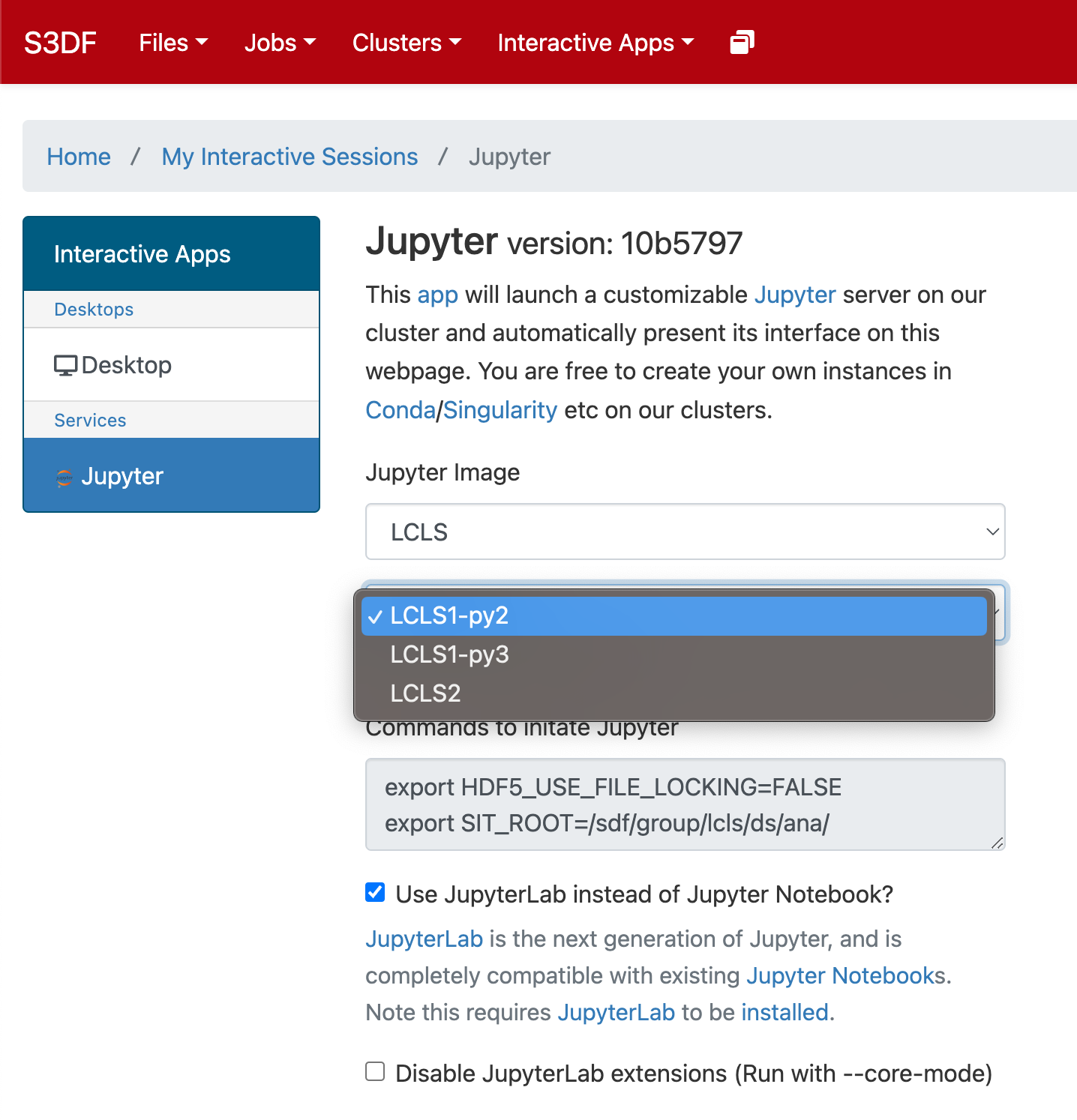

Under "Interactive Apps", select Jupyter; this opens the following form:

...

The page will first show a "Starting" status. After some time it will switch to "Running", and the "Connect to Jupyter" button will become available. Click the "Connect to Jupyter" button to start working in Jupyter. The Session ID link opens the directory where Jupyter is running and gives access to its files, including the logs.

Offsite data transfers

The S3DF provides a group of transfer nodes for moving data in/out. The nodes are accessed using the load balanced name: s3dfdtn.slac.stanford.edu

A Globus endpoint is available: slac#s3df