
...

- clean up existing space

- explore replacing disks in existing fileservers with 2 TB ones

- find new vendor(s)

- change storage model

Log

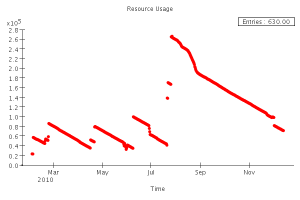

- For a snapshot of current xroot usage summary, see http://www.slac.stanford.edu/~wilko/glastmon/xrddisk.html

- 16 Dec 2010 - Performed cleanup of /glast/Scratch and P110 reprocessing directories. Available xroot space increased from ~48 TB to ~120 TB.

Clean up

We have 1.05 PB of space in xrootd now. Some 640 TB of that is taken up by L1, and 90% of that space is occupied by recon, svac and cal tuples.

...

\[added by Tom\]

For the plot (12/14/10) - note that it does not include the "wasted" xrootd space per server, currently running about 21 TB.

Current xroot holdings:

Data in tables current as of 12/8/2010

...

Therefore, with current holdings and usage, there is sufficient space for 71 days of Level 1 data (running out ~17 Feb 2011).
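The run-out estimate is plain date arithmetic, sketched below in Python. It assumes the 71 days are counted from the 12/8/2010 date of the tables. The free-space and ingest-rate figures are not reproduced here, so `days_of_headroom` takes them as parameters. Both function names are hypothetical and exist only for this illustration.

```python
from datetime import date, timedelta


def days_of_headroom(free_tb: float, ingest_tb_per_day: float) -> int:
    """Whole days until free space is exhausted at a constant ingest rate."""
    if ingest_tb_per_day <= 0:
        raise ValueError("ingest rate must be positive")
    return int(free_tb // ingest_tb_per_day)


def runout_date(as_of: date, headroom_days: int) -> date:
    """Date on which space runs out, counting from the as-of date."""
    return as_of + timedelta(days=headroom_days)


# Figures quoted on this page: tables current as of 8 Dec 2010, 71 days of headroom.
print(runout_date(date(2010, 12, 8), 71))  # 2011-02-17
```

Counting from a different as-of date, or with a changing Level 1 rate, shifts the result accordingly.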

Usage distribution:

...

| path | size [TB] | #files | Notes |
|---|---|---|---|
| /glast/Data | 785 | 807914 | |
| /glast/Data/Flight | 767 | 792024 | |
| /glast/Data/Flight/Level1/ | 713 | 535635 | 670 TB registered in dataCat |
| /glast/mc | 82 | 11343707 | |
| /glast/mc/ServiceChallenge/ | 56 | 6649775 | |
| /glast/Scratch | 51 | 358511 | ~50 TB recovery possible (removed 16 Dec 2010) |
| /glast/Data/Flight/Reprocess/ | 50 | 226757 | ~18 TB recovery possible |
| /glast/level0 | 13 | 2020329 | |
| /glast/bt | 4 | 760384 | |
| /glast/test | 2 | 2852 | |
| /glast/scratch | 1 | 51412 | |
| /glast/mvmd5 | 0 | 738 | |
| /glast/admin | 0 | 108 | |
| /glast/ASP | 0 | 19072 | |

Detail of Reprocess directories:

...

| path | size [GB] | #files | Notes | Removal Date |
|---|---|---|---|---|
| /glast/Data/Flight/Reprocess/P120 | 22734 | 65557 | potential production | |
| /glast/Data/Flight/Reprocess/P110 | 14541 | 49165 | removal candidate | 16 Dec 2010 |
| /glast/Data/Flight/Reprocess/P106-LEO | 8690 | 1025 | | |
| /glast/Data/Flight/Reprocess/P90 | 3319 | 2004 | removal candidate | |
| /glast/Data/Flight/Reprocess/CREDataReprocessing | 631 | 22331 | | |
| /glast/Data/Flight/Reprocess/P115-LEO | 533 | 1440 | | |
| /glast/Data/Flight/Reprocess/P110-LEO | 524 | 1393 | removal candidate | 16 Dec 2010 |
| /glast/Data/Flight/Reprocess/P120-LEO | 503 | 627 | potential production | |
| /glast/Data/Flight/Reprocess/P105 | 264 | 33465 | production | |
| /glast/Data/Flight/Reprocess/P107 | 212 | 4523 | | |
| /glast/Data/Flight/Reprocess/P116 | 109 | 25122 | production | |
| /glast/Data/Flight/Reprocess/Pass7-repro | 22 | 16 | removal candidate | |
| /glast/Data/Flight/Reprocess/P100 | 8 | 20089 | removal candidate | |
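As a cross-check on the "~18 TB recovery possible" note for the Reprocess area, the sketch below sums the rows marked "removal candidate" in the table above. The list layout and the name `recoverable_gb` are hypothetical, chosen for this illustration. The page does not say whether a TB here means 1000 GB or 1024 GB, so both conversions are printed.

```python
# (directory under /glast/Data/Flight/Reprocess, size in GB, note), copied from the table
REPROCESS = [
    ("P120", 22734, "potential production"),
    ("P110", 14541, "removal candidate"),
    ("P106-LEO", 8690, ""),
    ("P90", 3319, "removal candidate"),
    ("CREDataReprocessing", 631, ""),
    ("P115-LEO", 533, ""),
    ("P110-LEO", 524, "removal candidate"),
    ("P120-LEO", 503, "potential production"),
    ("P105", 264, "production"),
    ("P107", 212, ""),
    ("P116", 109, "production"),
    ("Pass7-repro", 22, "removal candidate"),
    ("P100", 8, "removal candidate"),
]


def recoverable_gb(rows, tag="removal candidate"):
    """Sum the sizes (GB) of all rows whose note matches the given tag."""
    return sum(size for _, size, note in rows if note == tag)


total = recoverable_gb(REPROCESS)
print(total)                   # 18414
print(round(total / 1000, 1))  # TB, taking 1 TB = 1000 GB
print(round(total / 1024, 1))  # TB, taking 1 TB = 1024 GB
```

Either conversion rounds to about 18 TB, which agrees with the note in the usage table.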

Detail of top-level Monte Carlo directory:

...

| path | size [GB] | #files | Notes |
|---|---|---|---|
| /glast/mc/ServiceChallenge | 57458 | 6649775 | |
| /glast/mc/OpsSim | 24691 | 4494890 | |
| /glast/mc/DC2 | 1818 | 136847 | |
| /glast/mc/OctoberTest | 41 | 29895 | |
| /glast/mc/XrootTest | 33 | 18723 | removal candidate |
| /glast/mc/Test | 14 | 13403 | removal candidate |

...

This option was received with a frown from CD. Of course, Oracle has thought of this and charges a lot for the replacement drives, which have special brackets. And then there is the manpower to replace them and the shell game of moving the data around. We'd have to press a lot harder to get traction on this option.

From Lance Nakata:

It's possible to attach storage trays to existing X4540 Thors and create additional ZFS disk pools for NFS or xrootd use. The Oracle J4500 tray is the same size and density of a Thor, though the disks in the remanufactured configs below are only 1TB, not 2TB. The Thors would probably require one SAS PCIe card, one SAS cable, and the J4500 would need to be located within a few meters. Implementing this might require the movement of some X4540s.

Another option is to attach the J4500 to a new server, but currently that would require additional server and Solaris support contract purchases. Please let us know if you'd be interested in this so Teri/Diana can investigate pricing and quantity available.

Thank you.

Lance

New Vendors

Two obvious candidates are in use at the Lab now: DDN used by LCLS, and Dell el cheapo by ATLAS.

...