...

The absolute path to the batch script. An example can be seen here. This script must contain the batch job submission command (bsub/sbatch), since that gives the user the ability to customize the batch submission. Overall, it can act as a wrapper that both submits the job and runs the code that analyzes the data.
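A minimal sketch of what such a wrapper might look like, assuming a Slurm site where `sbatch` is the submission command. The job name, log file name, `ANALYSIS_SCRIPT` path, and the `DRY_RUN` switch are hypothetical and only illustrate the idea; they are not taken from this page.

```bash
#!/bin/bash
# Hypothetical wrapper script: the job name, log file name, and analysis path
# below are illustrative assumptions, not values from this page.

# Path to the code that does the actual analysis (placeholder).
ANALYSIS_SCRIPT="${ANALYSIS_SCRIPT:-/path/to/analysis.sh}"

submit_job() {
    # Build the submission command; --job-name, --output and --ntasks are
    # standard sbatch options, and %j expands to the Slurm job ID.
    set -- sbatch --job-name=analysis_example \
                  --output=analysis_example_%j.log \
                  --ntasks=1 \
                  "${ANALYSIS_SCRIPT}"

    if [ "${DRY_RUN:-0}" = "1" ] || ! command -v sbatch >/dev/null 2>&1; then
        # Print the command instead of submitting (dry run, or no Slurm here).
        echo "would run: $*"
    else
        "$@"
    fi
}

submit_job
```

Keeping the submission command inside the script is what lets each experiment choose its own queue options; on an LSF site the `sbatch` line would be replaced by the equivalent `bsub` call.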

...

The parameters that will be passed to the executable. They must be space-separated. Any number of name-value pairs can be associated with a job definition. These are then made available to the above executable as environment variables. In the above example, the value of the parameter LOOP_COUNT (100) can be obtained in a bash script by using ${LOOP_COUNT}.
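As a rough illustration of reading such a parameter, the sketch below loops `LOOP_COUNT` times. The function name `run_loop` and the fallback value of 1 are assumptions made for this example; only the `LOOP_COUNT` name comes from the text above.

```bash
#!/bin/bash
# Sketch of an executable that reads a job parameter from its environment.
# LOOP_COUNT is the parameter from the example above; the fallback of 1 is
# an assumption for when the variable is not set.

run_loop() {
    count="${LOOP_COUNT:-1}"
    i=1
    while [ "$i" -le "$count" ]; do
        echo "iteration ${i} of ${count}"
        i=$((i + 1))
    done
}

run_loop
```

Supplying a default with `${LOOP_COUNT:-1}` keeps the script usable when it is run by hand outside the batch system, where the parameter would not be set.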

1.1.4)

...

Used for experiments that are currently running. If checked, every run that finishes after the box is checked will have that hash executed on it, as the user who checked the box. Hovering over the checkbox shows who checked it.

1.1.5) Delete

...

Location

This defines where the analysis is done. While many experiments use the psana cluster (SLAC) for their analysis, some prefer HPC facilities such as NERSC.

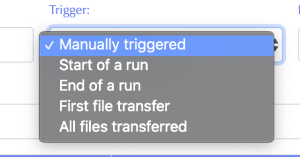

1.1.4) Trigger

This defines the event in the data management system that kicks off the job submission.

- Manually triggered - The user will manually trigger the job in the Workflow Control tab.

- Start of a run - When the DAQ starts a new run.

- End of a run - When the DAQ closes a run.

- First file transfer - When the data movers indicate that the first registered file for a run has been transferred and is available at the job location.

- All files transferred - When the data movers indicate that all registered files for a run have been transferred and are available at the job location.

1.1.5) As user

The job will be executed as this user.

1.1.6) Edit/delete job

1.2) Batch Control

Under the Workflow tab, select Batch Processing and then select the Control tab to create and check the status of batch jobs. The Control tab is where hashes defined in the Definitions tab may be applied to experiment runs. In the drop-down menu of the Action column, any hash defined in Batch defs may be selected.

...