Compression methods supported by HDF5

This page covers three compression filters available for HDF5 data: gzip, SZIP, and LZF.

gzip

file to file compression

gzip -c test.xtc > test.xtc.gz

-rw-r--r-- 1 dubrovin br 168126152 Jan 23 14:48 test.xtc

-rw-r--r-- 1 dubrovin br 88829288 Jan 23 14:51 test.xtc.gz

compression factor = 1.89, time 30sec
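The same file-to-file test can be repeated from Python with the standard gzip module. This is a minimal sketch, not the command used above: the helper name gzip_file and the temporary demo file are illustrative assumptions, and compresslevel=6 is chosen to match the default level of the gzip command-line tool.

```python
import gzip
import os
import shutil
import tempfile


def gzip_file(src, dst, level=6):
    """Compress file src into dst and return the compression factor."""
    with open(src, "rb") as f_in, gzip.open(dst, "wb", compresslevel=level) as f_out:
        shutil.copyfileobj(f_in, f_out)
    return os.path.getsize(src) / os.path.getsize(dst)


# Demo on a temporary file (a stand-in for test.xtc, which is not available here).
with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "demo.dat")
    with open(src, "wb") as f:
        f.write(bytes(range(256)) * 4096)
    factor = gzip_file(src, src + ".gz")
    print(f"compression factor = {factor:.2f}")

# Compression factor from the file sizes listed above.
print(f"test.xtc factor = {168126152 / 88829288:.2f}")
```

The compression factor is the ratio of the original size to the compressed size, so larger values mean better compression.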

zlib in-memory compress/decompress of a single CSPAD image

exp=cxitut13:run=10 event 11:

Array entropy is evaluated using the formula from Entropy (information theory).
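For reference, the Shannon entropy of an array is H = -sum(p_i * log2(p_i)), where p_i is the fraction of elements that take the i-th distinct value. The sketch below shows one way to compute it with NumPy. The function name entropy and the synthetic test array are assumptions made for illustration, not the code used for the numbers on this page, and the meaning of H(cpo) is not covered.

```python
import numpy as np


def entropy(values):
    """Shannon entropy in bits per element: H = -sum(p_i * log2(p_i))."""
    values = np.asarray(values).ravel()
    _, counts = np.unique(values, return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


# Synthetic stand-in for a detector image (assumption: the real data are not available here).
rng = np.random.default_rng(0)
nda = rng.normal(1080, 30, size=(4, 185, 388)).astype(np.int16)

h16 = entropy(nda)                 # each int16 value treated as one symbol
h8 = entropy(nda.view(np.uint8))   # each byte treated as one symbol
print(f"H(16-bit) = {h16:.3f} H(8-bit) = {h8:.3f}")
```

The entropy gives a lower bound, in bits per symbol, for any coder that encodes symbols independently, which makes it a useful yardstick for the compression factors reported below.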

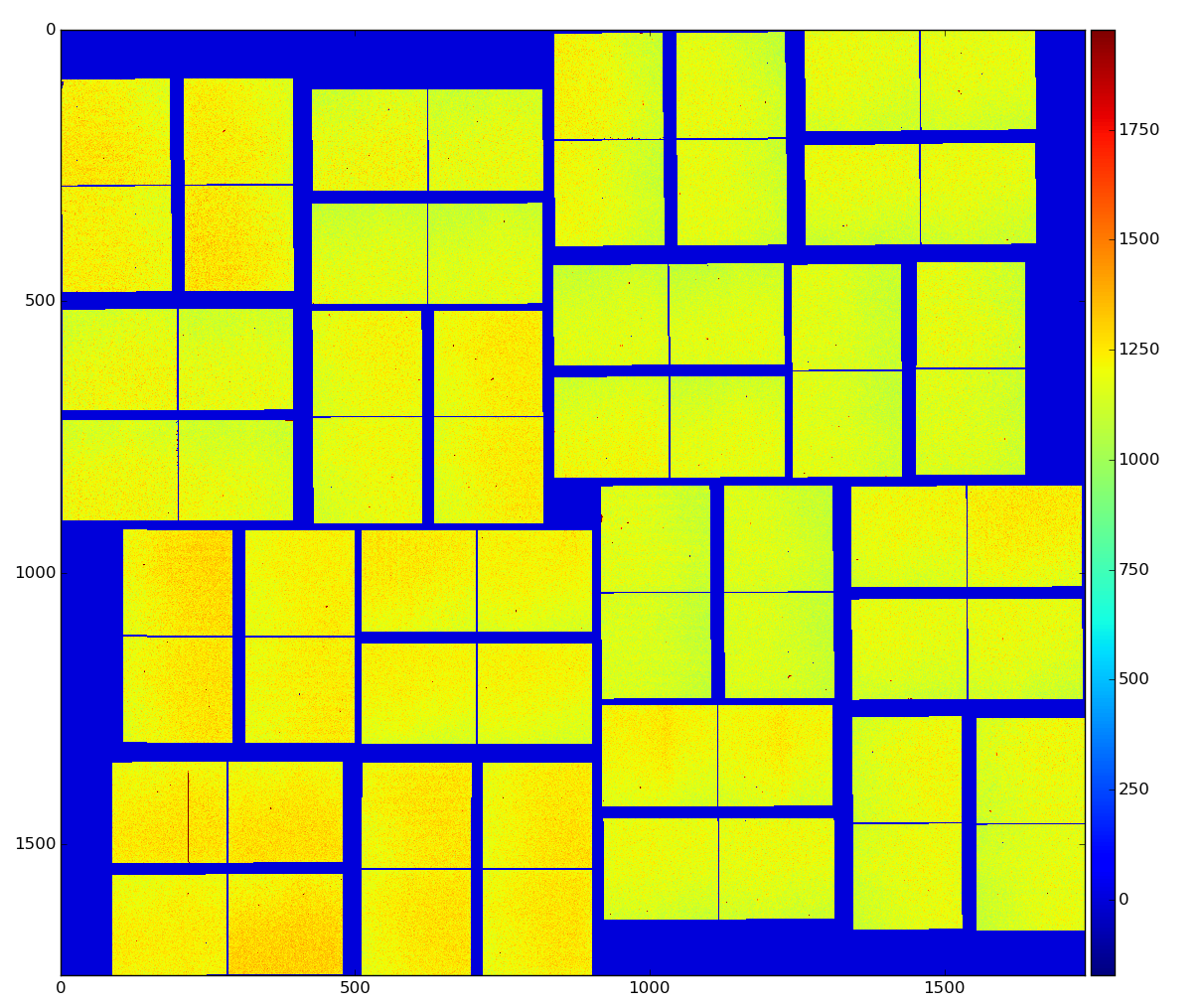

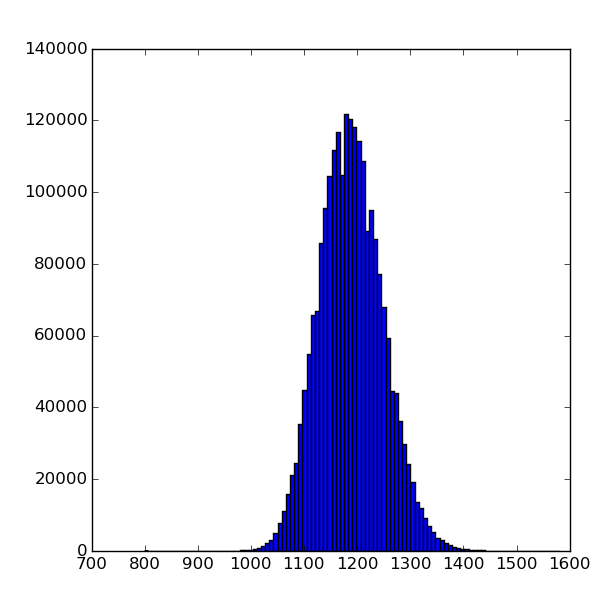

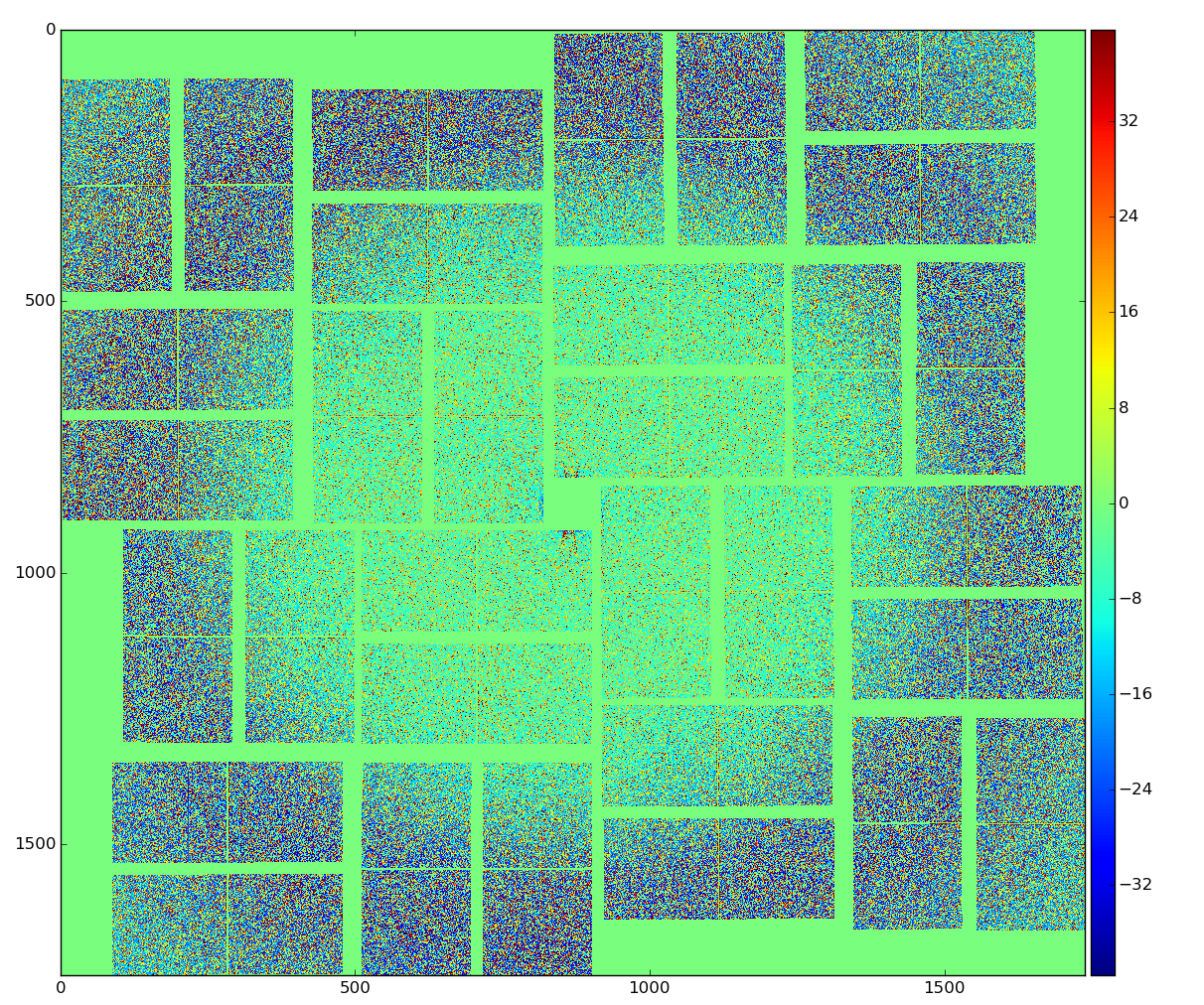

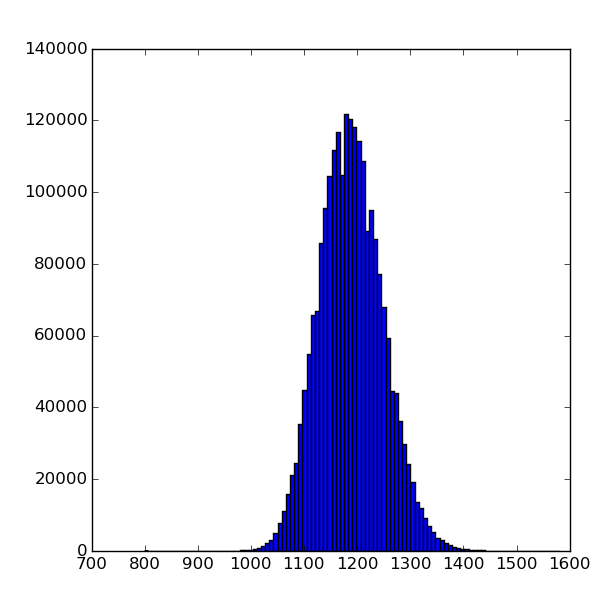

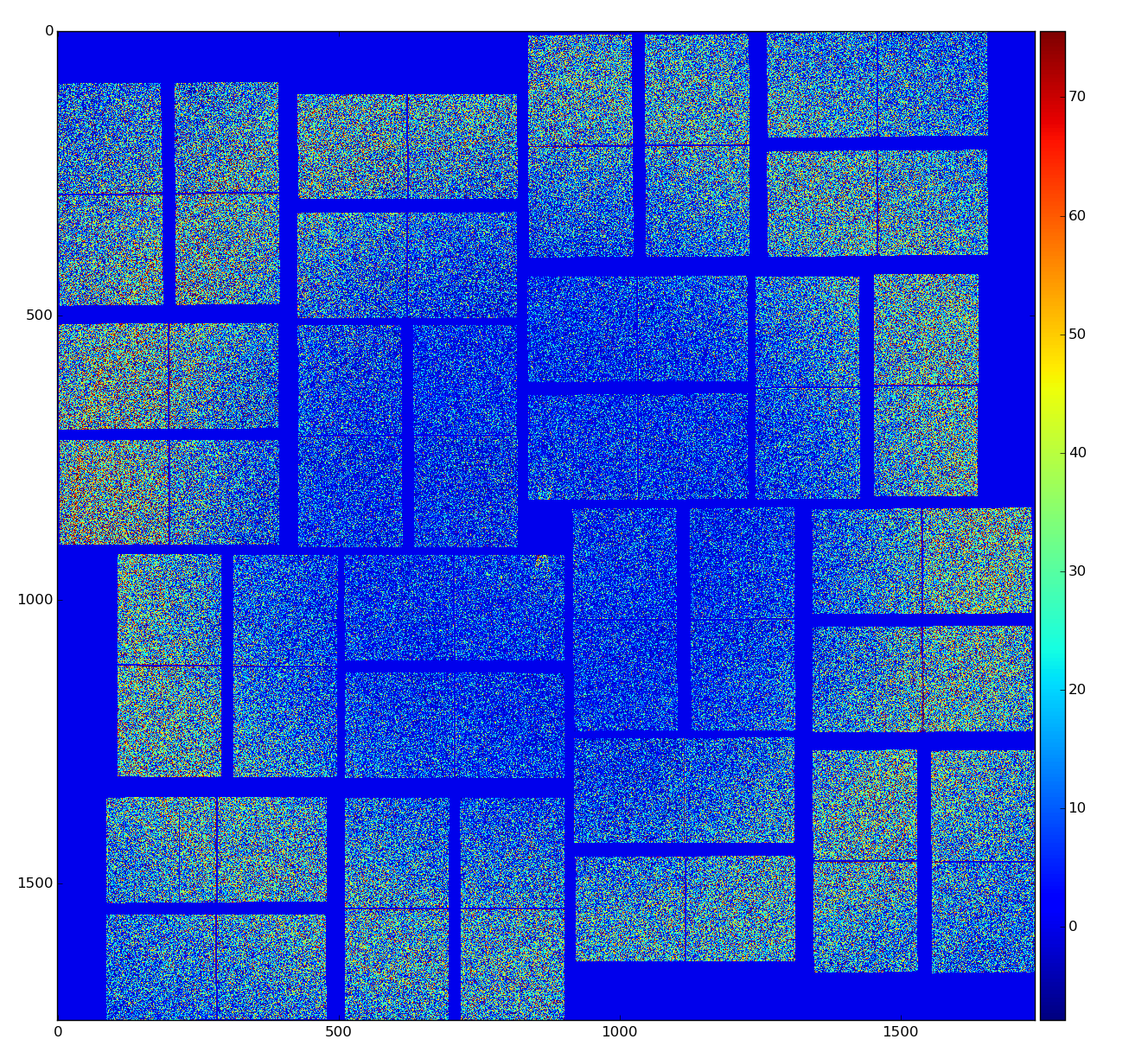

Raw data

Load data from file nda-cxitut13-r0010-e000011-raw.npy

raw data: shape:(32, 185, 388) size:2296960 dtype:int16 [1028 1082 1101 1072 1131]...

Array entropy H(16-bit) = 7.947 H(8-bit) = 5.080 H(cpo) = 7.947

zlib level=0: data size (bytes) in/out = 4593957/4594663 = 1.000 time(sec)=0.025749 t(decomp)=0.005665

zlib level=1: data size (bytes) in/out = 4593957/2922633 = 1.572 time(sec)=0.108629 t(decomp)=0.026618

zlib level=2: data size (bytes) in/out = 4593957/2908156 = 1.580 time(sec)=0.125363 t(decomp)=0.029112

zlib level=3: data size (bytes) in/out = 4593957/2884917 = 1.592 time(sec)=0.170814 t(decomp)=0.027699

zlib level=4: data size (bytes) in/out = 4593957/2886850 = 1.591 time(sec)=0.158719 t(decomp)=0.029466

zlib level=5: data size (bytes) in/out = 4593957/2885665 = 1.592 time(sec)=0.261296 t(decomp)=0.030550

zlib level=6: data size (bytes) in/out = 4593957/2834066 = 1.621 time(sec)=0.597133 t(decomp)=0.027355

zlib level=7: data size (bytes) in/out = 4593957/2828951 = 1.624 time(sec)=0.609569 t(decomp)=0.026842

zlib level=8: data size (bytes) in/out = 4593957/2828951 = 1.624 time(sec)=0.636173 t(decomp)=0.027226

zlib level=9: data size (bytes) in/out = 4593957/2828951 = 1.624 time(sec)=0.611562 t(decomp)=0.027042
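A scan over zlib levels like the one above can be sketched with the standard zlib module. This is an illustration under stated assumptions rather than the original test script: the function name zlib_scan is hypothetical, the input is a synthetic array serialized with tobytes(), and how the original test serialized the image is not known, so the input sizes will not match the listing exactly.

```python
import time
import zlib

import numpy as np


def zlib_scan(data, levels=range(10)):
    """Compress and decompress data at each zlib level, recording sizes and timings."""
    results = []
    for level in levels:
        t0 = time.perf_counter()
        packed = zlib.compress(data, level)
        t_comp = time.perf_counter() - t0

        t0 = time.perf_counter()
        unpacked = zlib.decompress(packed)
        t_decomp = time.perf_counter() - t0

        assert unpacked == data  # the round trip must be lossless
        results.append((level, len(data), len(packed), t_comp, t_decomp))
    return results


# Synthetic stand-in for one image (assumption: the real .npy file is not available here).
rng = np.random.default_rng(0)
nda = rng.normal(1080, 30, size=(2, 185, 388)).astype(np.int16)

for level, n_in, n_out, t_comp, t_decomp in zlib_scan(nda.tobytes()):
    print(f"zlib level={level}: data size (bytes) in/out = {n_in}/{n_out} = {n_in / n_out:.3f} "
          f"time(sec)={t_comp:.6f} t(decomp)={t_decomp:.6f}")
```

Level 0 stores the data without compression, which is why its output is slightly larger than its input.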

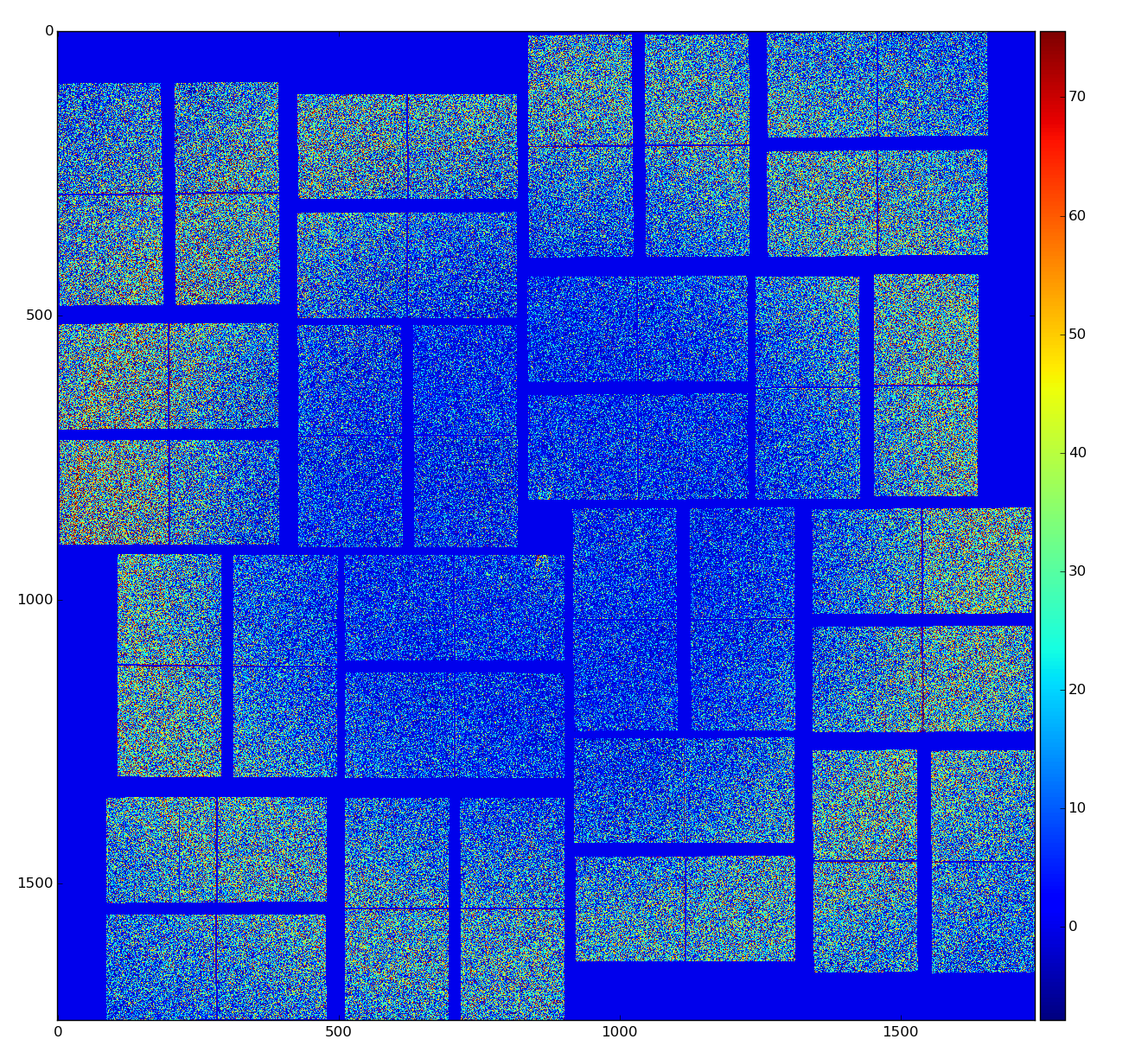

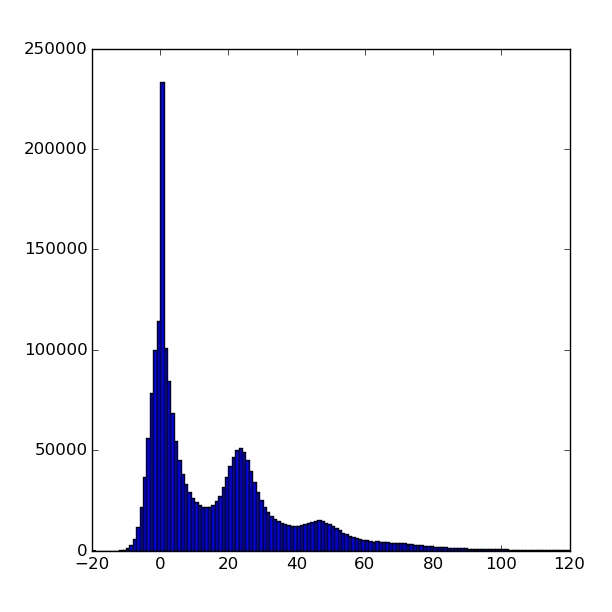

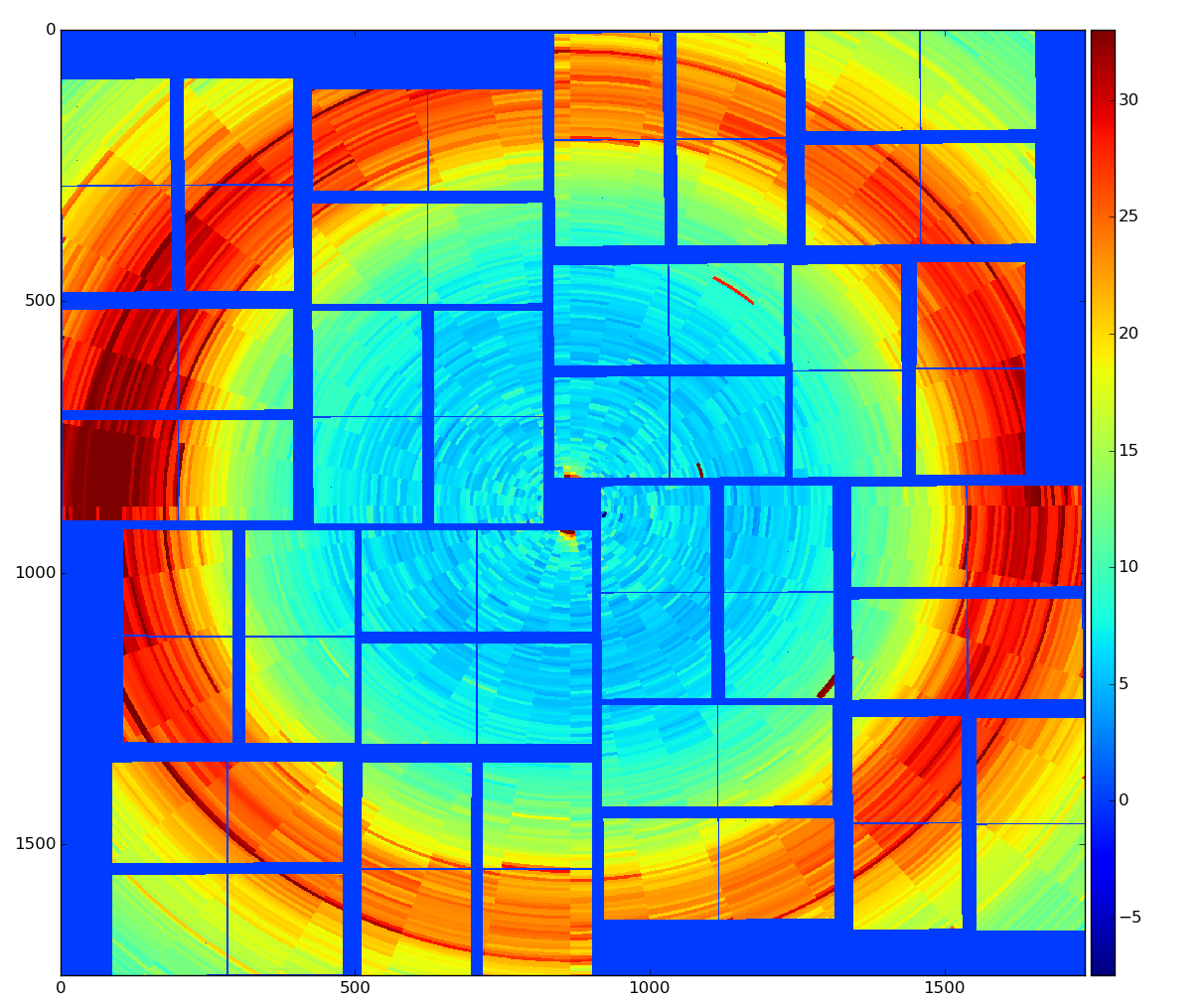

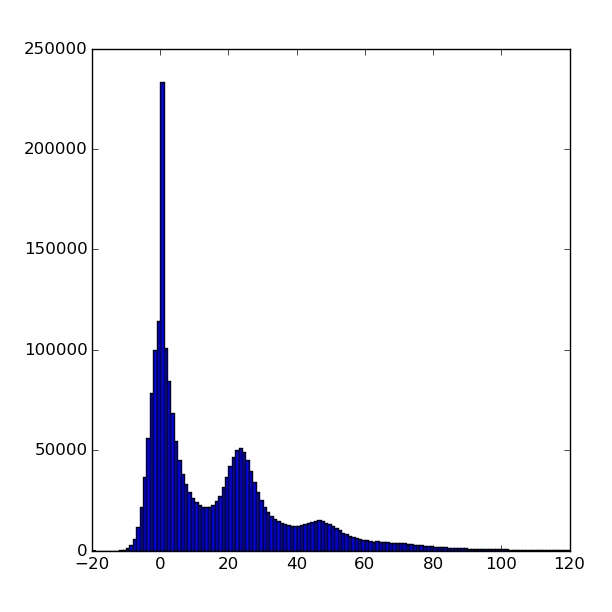

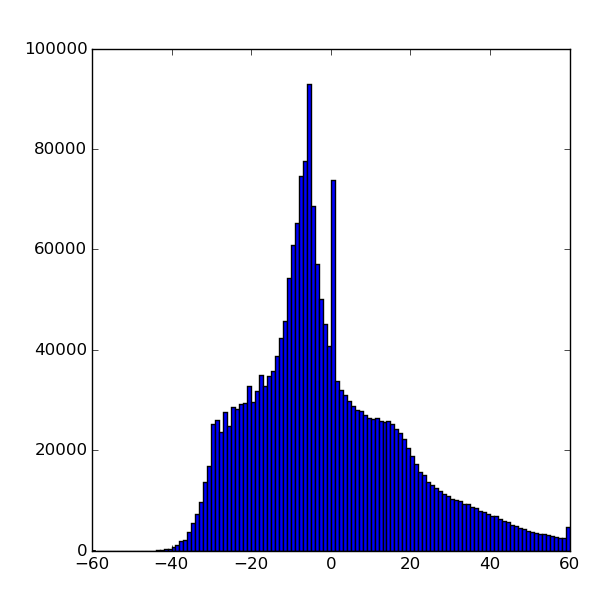

Calibrated data

Calibrated data were obtained using the det.calib(...) method, which essentially subtracts pedestals and applies a common-mode correction to the raw data.

Array entropy H(16-bit) = 5.844 H(8-bit) = 3.951 H(cpo) = 5.844

zlib level=0: data size (bytes) in/out = 4593957/4594663 = 1.000 time(sec)=0.249886 t(decomp)=0.176141

zlib level=1: data size (bytes) in/out = 4593957/2261808 = 2.031 time(sec)=0.085962 t(decomp)=0.020319

zlib level=2: data size (bytes) in/out = 4593957/2240202 = 2.051 time(sec)=0.101097 t(decomp)=0.019582

zlib level=3: data size (bytes) in/out = 4593957/2187212 = 2.100 time(sec)=0.152278 t(decomp)=0.048836

zlib level=4: data size (bytes) in/out = 4593957/2183572 = 2.104 time(sec)=0.142880 t(decomp)=0.049567

zlib level=5: data size (bytes) in/out = 4593957/2242648 = 2.048 time(sec)=0.308234 t(decomp)=0.022228

zlib level=6: data size (bytes) in/out = 4593957/2217193 = 2.072 time(sec)=0.677328 t(decomp)=0.020837

zlib level=7: data size (bytes) in/out = 4593957/2205357 = 2.083 time(sec)=0.975548 t(decomp)=0.023253

zlib level=8: data size (bytes) in/out = 4593957/2195009 = 2.093 time(sec)=1.581390 t(decomp)=0.023262

zlib level=9: data size (bytes) in/out = 4593957/2193889 = 2.094 time(sec)=1.802204 t(decomp)=0.020965

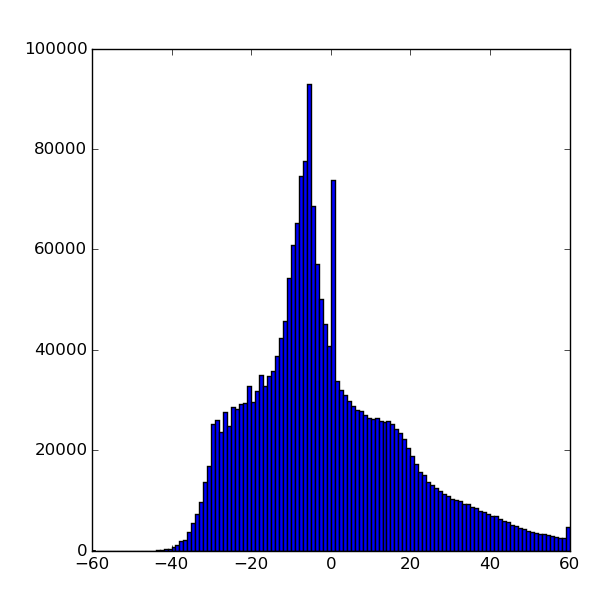

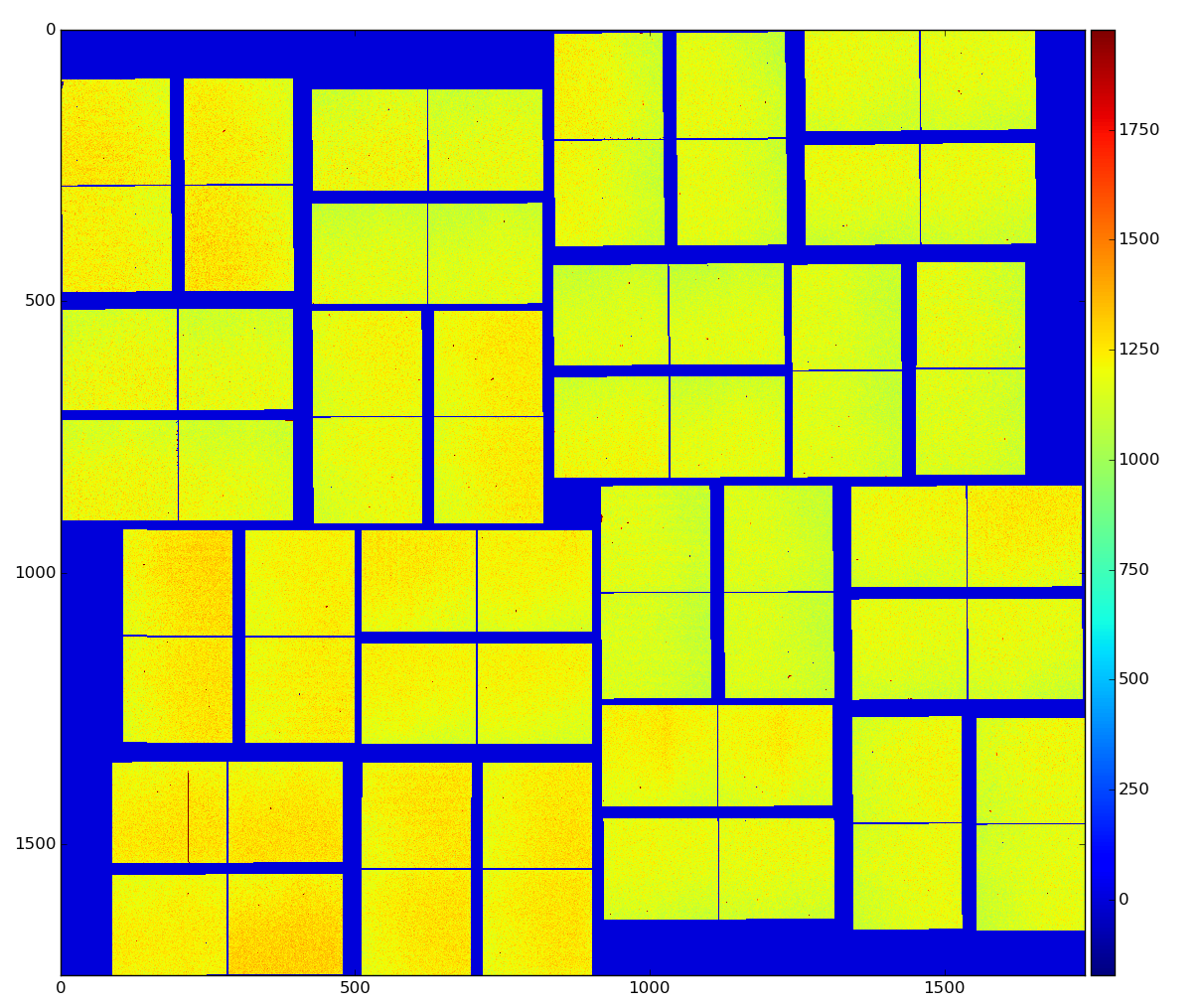

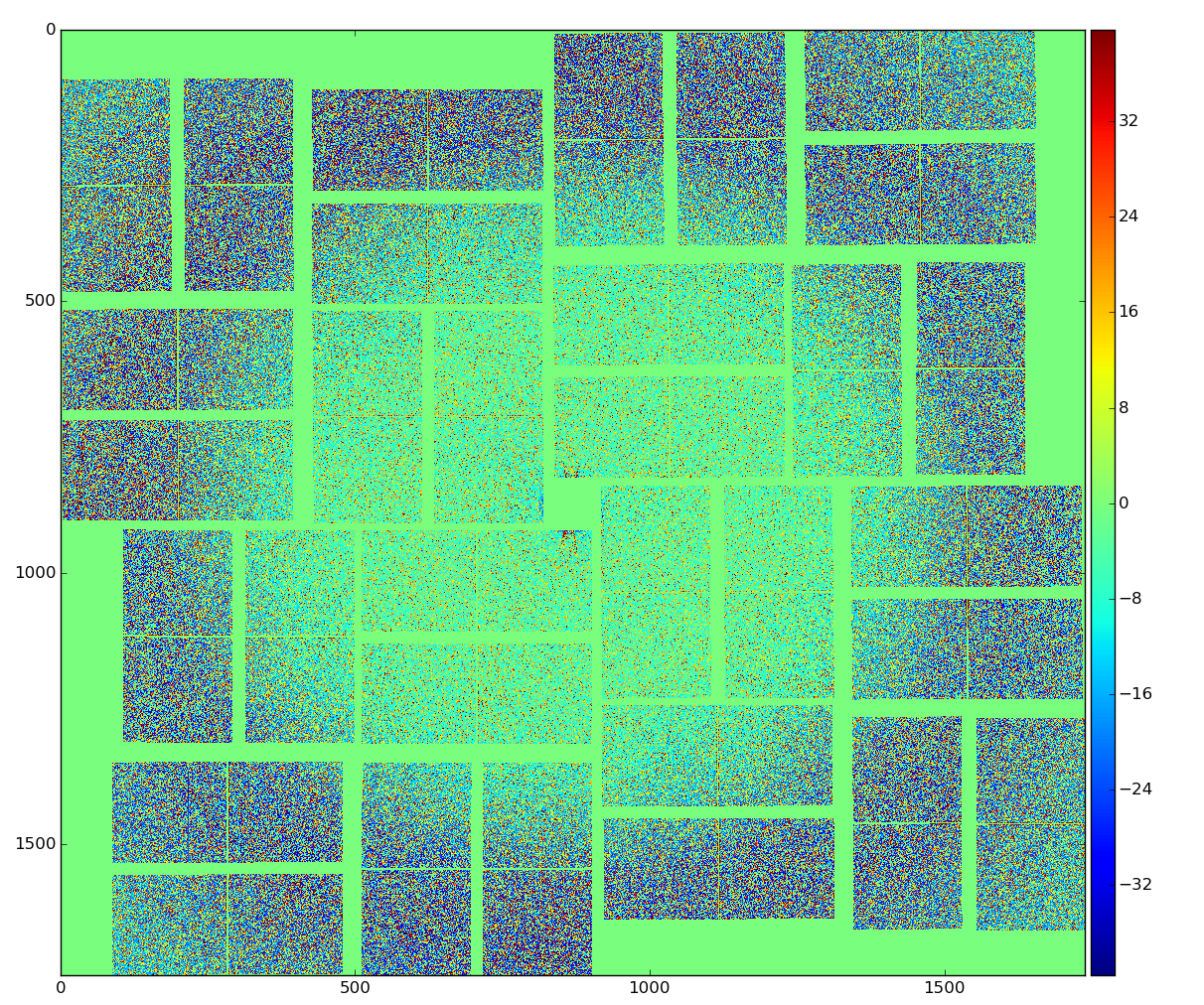

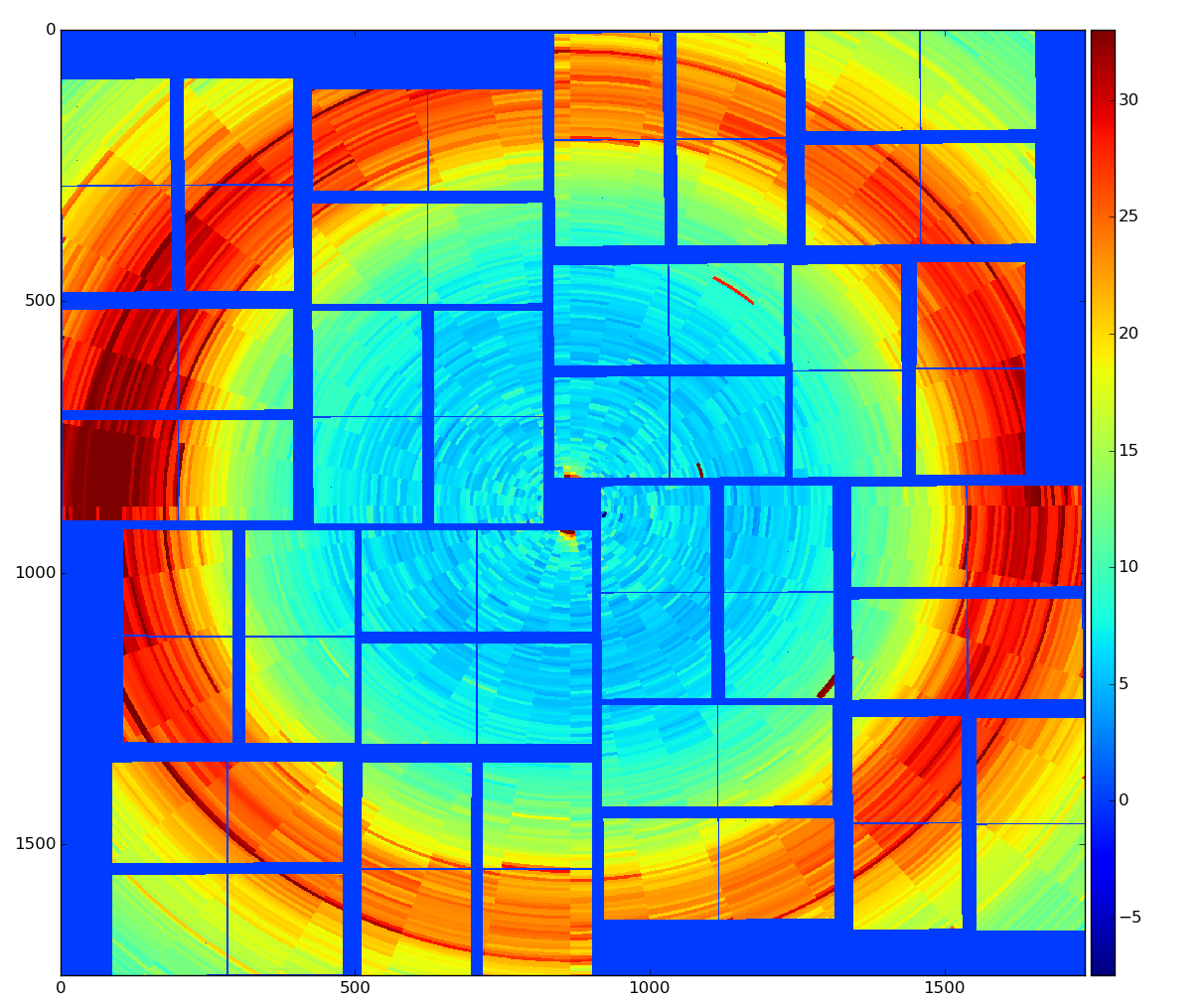

Calibrated and radial-background subtracted data

binned background shape:

Array entropy H(16-bit) = 6.280 H(8-bit) = 4.487 H(cpo) = 6.280

zlib level=0: data size (bytes) in/out = 4593957/4594663 = 1.000 time(sec)=0.035164 t(decomp)=0.007174

zlib level=1: data size (bytes) in/out = 4593957/2322746 = 1.978 time(sec)=0.137170 t(decomp)=0.019560

zlib level=2: data size (bytes) in/out = 4593957/2310816 = 1.988 time(sec)=0.090709 t(decomp)=0.019657

zlib level=3: data size (bytes) in/out = 4593957/2270123 = 2.024 time(sec)=0.137816 t(decomp)=0.023169

zlib level=4: data size (bytes) in/out = 4593957/2257567 = 2.035 time(sec)=0.113220 t(decomp)=0.027111

zlib level=5: data size (bytes) in/out = 4593957/2323615 = 1.977 time(sec)=0.345213 t(decomp)=0.022773

zlib level=6: data size (bytes) in/out = 4593957/2312382 = 1.987 time(sec)=0.708425 t(decomp)=0.022472

zlib level=7: data size (bytes) in/out = 4593957/2307002 = 1.991 time(sec)=0.935245 t(decomp)=0.023992

zlib level=8: data size (bytes) in/out = 4593957/2304653 = 1.993 time(sec)=1.201955 t(decomp)=0.022417

zlib level=9: data size (bytes) in/out = 4593957/2304574 = 1.993 time(sec)=1.215707 t(decomp)=0.022277

Entropy of low and high bytes

load_nda_from_file:

Data from file nda-cxitut13-r0010-e000011-raw.npy: shape:(32, 185, 388) size:2296960 dtype:int16 [1028 1082 1101 1072 1131]...

H(8-bit) = 5.080

nda8 : shape:(2296960, 2) size:4593920 dtype:uint8 [ 4 4 58 4 77 4 48 4 107 4]...

# split the data array in two, one with the even (low) bytes and one with the odd (high) bytes:

nda8L: shape:(2296960,) size:2296960 dtype:uint8 [ 4 58 77 48 107 73 45 103 28 89]...

nda8H: shape:(2296960,) size:2296960 dtype:uint8 [4 4 4 4 4 4 4 4 4 4]...

H(low -byte) = 7.821

H(high-byte) = 0.376
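The low/high byte split can be sketched as follows. The function names split_bytes and entropy are illustrative assumptions, and the code assumes a little-endian byte layout, which is consistent with the nda8 listing above, where 1028 appears as the byte pair 4, 4 and 1082 as 58, 4.

```python
import numpy as np


def entropy(values):
    """Shannon entropy in bits per element: H = -sum(p_i * log2(p_i))."""
    _, counts = np.unique(np.asarray(values).ravel(), return_counts=True)
    p = counts / counts.sum()
    return float(-(p * np.log2(p)).sum())


def split_bytes(nda16):
    """Split a 16-bit integer array into its low-byte and high-byte streams."""
    # Force little-endian int16 so that column 0 is always the low byte.
    nda8 = np.asarray(nda16).astype("<i2").ravel().view(np.uint8).reshape(-1, 2)
    return nda8[:, 0], nda8[:, 1]


# First five raw values quoted in the listing above.
nda = np.array([1028, 1082, 1101, 1072, 1131], dtype=np.int16)
low, high = split_bytes(nda)
print("nda8L:", low)
print("nda8H:", high)
print(f"H(low -byte) = {entropy(low):.3f}")
print(f"H(high-byte) = {entropy(high):.3f}")
```

In the measurement above, almost all of the information sits in the low byte, while the high byte is nearly constant. That suggests the two byte streams could be handled separately, although this page does not test that.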

SZIP

"SZIP is a patented compression technology used extensively by NASA. Generally you only have to worry about this if you’re exchanging files with people who use satellite data. Because of patent licensing restrictions, many installations of HDF5 have the compressor (but not the decompressor) disabled."

dset = myfile.create_dataset("Dataset3", (1000,), compression="szip")

SZIP features:

- Integer (1, 2, 4, 8 byte; signed/unsigned) and floating-point (4/8 byte) types only

- Fast compression and decompression

- A decompressor that is almost always available

LZF

"For files you’ll only be using from Python, LZF is a good choice. It ships with h5py; C source code is available for third-party programs under the BSD license. It’s optimized for very, very fast compression at the expense of a lower compression ratio compared to GZIP. The best use case for this is if your dataset has large numbers of redundant data points."

dset = myfile.create_dataset("Dataset4", (1000,), compression="lzf")

LZF features:

- Works with all HDF5 types

- Fast compression and decompression

- Is only available in Python (ships with h5py); C source available

References