
...

NOTE: check for A and B cable swaps as described above using the remote link id's shown in hsdpva and kcuStatus.

Firmware upgrade from JTAG to PCIE

Install firmware newer than "hsd_6400m-0x05000100-20240424152429-weaver-b701acb.mcs".

Install datadev.ko:

- Log in to daq-tmo-hsd-01 or 02. On hsd-02 one needs to remount the filesystem as rw to make any modifications:

> sudo mount -o remount,rw /

> git clone git@github.com:slaclab/aes-stream-drivers (we were at tag 6.0.1 the last time we installed)

> cd aes-stream-drivers/data_dev/driver

> make

> sudo cp datadev.ko /usr/local/sbin/

- Create a /lib/systemd/system/kcu.service

- > sudo systemctl enable kcu.service

- > sudo systemctl start kcu.service

Modify hsd.cnf:

procmgr_config.append({host:peppex_node, id:'hsdioc_tmo_{:}'.format(peppex_hsd), port:'%d'%iport, flags:'s', env:hsd_epics_env, cmd:'hsd134PVs -P {:}_{:} -d /dev/datadev_{:}'.format(peppex_epics,peppex_hsd.upper(),peppex_hsd)})

iport += 1

So that it points to /dev/datadev/

Run:

> procmgr stopall hsd.cnf

and restart it all

> procmgr start hsd.cnf

These last two steps may need to be repeated a couple of times.

Start the DAQ and send a configure. This step may also need to be repeated a couple of times.

Fiber Optic Powers

You can see optical powers on the kcu1500 with the pykcu command (and pvget), although see below for examples of problems, so I have the impression this isn't reliable. See the timing-system section for an example of how to run pykcu. On the hsd's themselves it's not possible because the FPGA (on the hsd pcie carrier card) doesn't have access to the i2c bus (on the data card). Matt says that in principle the hsd card can see the optical power from the timing system, but that may require firmware changes.

...

From Chris Ford. See also ConfigDB and DAQ configdb CLI Notes. Supports ls/cat/cp. NOTE: when copying across hutches it's important to specify the user for the destination hutch. For example:

| Code Block |

|---|

configdb cp --user rixopr --password <usual> --write tmo/BEAM/tmo_atmopal_0 rix/BEAM/atmopal_0 |
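The cross-hutch rule above can be sketched as a small helper that derives the `--user` argument from the destination path. This is only an illustration: the function name `configdb_cp_argv` is hypothetical, and the `<hutch>opr` account naming is an assumption inferred from the rixopr/tmoopr/tstopr examples on this page, not something configdb enforces.

```python
def configdb_cp_argv(src, dst, password):
    """Build the argument list for a configdb copy.

    The user is taken from the DESTINATION hutch (the first path component
    of dst). The '<hutch>opr' naming is an assumption based on the account
    names that appear in these notes.
    """
    dst_hutch = dst.split("/")[0]
    user = f"{dst_hutch}opr"
    return ["configdb", "cp", "--user", user, "--password", password,
            "--write", src, dst]


# Reproduces the command shown above (password left as a placeholder).
print(" ".join(configdb_cp_argv("tmo/BEAM/tmo_atmopal_0",
                                "rix/BEAM/atmopal_0",
                                "<usual>")))
```

Deriving the user from the destination rather than the source is the whole point: the write is authenticated against the hutch being written to.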

Storing (and Updating) Database Records with <det>_config_store.py

Detector records can be defined and then stored in the database using the <det>_config_store.py scripts. For a minimal model see hrencoder_config_store.py, which has just a couple of additional entries beyond the defaults (which should be stored for every detector).

Once the record has been defined in the script, the script can be run with a few command-line arguments to store it in the database.

The following call should be appropriate for most use cases.

| Code Block |

|---|

python <det>_config_store.py --name <unique_detector_name> [--segm 0] --inst <hutch> --user <usropr> --password <usual> [--prod] --alias BEAM |

Verify the following:

- User and hutch (--inst) are as defined in the cnf file for the specific configuration; include the user as --user <usr>. This is used for HTTP authentication. E.g., tmoopr, tstopr.

- Password is the standard password used for HTTP authentication.

- Include --prod if using the production database. This will need to match the entry in your cnf file as well, defined as cdb. https://pswww.slac.stanford.edu/ws-auth/configdb/ws is the production database.

- Do not include the segment number in the detector name. If you are updating an entry for a segment other than 0, pass --segm $SEGM in addition to --name $DETNAME.

python <det>_config_store.py --help is available and will display all arguments.

There are similar scripts that can be used to update entries. E.g.:

hsd_config_update.py

Making Schema Updates in configdb

i.e. changing the structure of an object while keeping existing values the same. An example from Ric: "Look at the __main__ part of epixm320_config_store.py. Then run it with --update. You don’t need the bit regarding args.dir. That’s for uploading .yml files into the configdb."

Here's an example from Matt: python /cds/home/opr/rixopr/git/lcls2_041224/psdaq/psdaq/configdb/hsd_config_update.py --prod --inst tmo --alias BEAM --name hsd --segm 0 --user tmoopr --password XXXX

MCC Epics Archiver Access

Matt gave us a video tutorial on how to access the MCC epics archiver.


TEB/MEB

(conversation with Ric on 06/16/20 on TEB grafana page)

BypCt: number bypassing the TEB

BtWtg: boolean saying whether we're waiting to allocate a batch

TxPdg (MEB, TEB, DRP): boolean. libfabric saying try again to send to the designated destination (meb, teb, drp)

RxPdg (MEB, TEB, DRP): same as above but for Rx.

(T(eb)M(eb))CtbOInFlt: incremented on a send, decremented on a receive (hence "in flight")

In tables at the bottom: ToEvtCnt is number of events timed out by teb

WrtCnt MonCnt PsclCnt: the trigger decisions

TOEvtCnt TIEvtCnt: O is outbound from drp to teb, I is inbound from teb to drp

Look in teb log file for timeout messages. To get contributor id look for messages like this in drp:

/reg/neh/home/cpo/2020/06/16_18:19:24_drp-tst-dev010:tmohsd_0.log:Parameters of Contributor ID 8:

Conversation from Ric and Valerio on the opal file-writing problem (11/30/2020):


I was poking around with daqPipes just to familiarize myself with it and I was looking at the crash this morning at around 8.30. I noticed that at 8.25.00 the opal queue is at 100% and teb0 is starting to give bad signs (again at ID0, from the bit mask). However, if I make steps of 1 second, I see that it seems to recover, with the queue occupancy dropping to 98, 73 then 0. However, a few seconds later the drp batch pool for all the hsd lists are blocked. I would like to ask you (answer when you have time, it is just for me to understand): is this the usual Opal problem that we see? Why does it seem to recover before the batch pool blocks? I see that the first batch pool to be exhausted is the opal one. Is this somehow related?

- I’ve still been trying to understand that one myself, but keep getting interrupted to work on something else, so here is my perhaps half baked thought: Whatever the issue is that blocks the Opal from writing, eventually goes away and so it can drain. The problem is that that is so late that the TEB has started timing out a (many?) partially built event(s). Events for which there is no contributor don’t produce a result for the missing contributor, so if that contributor (sorry, DRP) tried to produce a contribution, it never gets an answer, which is needed to release the input batch and PGP DMA buffer. Then when the system unblocks, a SlowUpdate (perhaps, could be an L1A, too, I think) comes along with a timestamp so far in the future that it wraps around the batch pool, a ring buffer. This blocks because there might already be an older contribution there that is waiting to be released. It scrambles my brain to think about, so apologies if it isn’t clear. I’m trying to think of a more robust way to do it, but haven’t gotten very far yet.

- One possibility might be for the contributor/DRP to time out the input buffer in EbReceiver, so that if a result matching that input never arrives, the input buffer and PGP buffer are released. This could produce some really complicated failure modes that are hard to debug, because the system wouldn’t stop. Chris discouraged me from going down that path for fear of making things more complicated, rightly so, I think.

If a contribution is missing, the *EBs time it out (4 seconds, IIRR), then mark the event with DroppedContribution damage. The Result dgram (TEB only, and it contains the trigger decision) receives this damage and is sent to all contributors that the TEB heard from for that event. Sending it to contributors it didn’t hear from might cause problems because they might have crashed. Thus, if there’s damage raised by the TEB, it appears in all contributions that the DRPs write to disk and send to the monitoring. This is the way you can tell in the TEB Performance grafana plots whether the DRP or the TEB is raising the damage.


Ok thank you. But when you say: "If that contributor (sorry, DRP) tried to produce a contribution, it never gets an answer, which is needed to release the input batch and PGP DMA buffer". I guess you mean that the DRP tries to collect a contribution for a contributor that maybe is not there. But why would the DRP try to do that? It should know about the damage from the TEB's trigger decision, right? (Do not feel compelled to answer immediately, when you have time)

The TEB continues to receive contributions even when there’s an incomplete event in its buffers. Thus, if an event or series of events is incomplete, but a subsequent event does show up as complete, all those earlier incomplete events are marked with DroppedContribution and flushed, with the assumption they will never complete. This happens before the timeout time expires. If the missing contributions then show up anyway (the assumption was wrong), they’re out of time order, and thus dropped on the floor (rather than starting a new event which will have the originally found contributors in it missing (Aaah, my fingers have form knots!), causing a split event (don’t know if that’s universal terminology)). A split event is often indicative of the timeout being too short.

- The problem is that the DRP’s Result thread, what we call EbReceiver, if that thread gets stuck, like in the Opal case, for long enough, it will backpressure into the TEB so that it hangs in trying to send some Result to the DRPs. (I think the half-bakedness of my idea is starting to show…) Meanwhile, the DRPs have continued to produce Input Dgrams and sent them to the TEB, until they ran out of batch buffer pool. That then backs up in the KCU to the point that the Deadtime signal is asserted. Given the different contribution sizes, some DRPs send more Inputs than others, I think. After long enough, the TEB’s timeout timer goes off, but because it’s paralyzed by being stuck in the Result send(), nothing happens (the TEB is single threaded) and the system comes to a halt. At long last, the Opal is released, which allows the TEB to complete sending its ancient Result, but then continues on to deal with all those timed out events. All those timed out events actually had Inputs which now start to flow, but because the Results for those events have already been sent to the contributors that produced their Inputs in time, the DRPs that produced their Inputs late never get to release their Input buffers.
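The wrap-around blocking that Ric describes (a much later contribution landing on a batch-pool slot that is still waiting for its Result) can be illustrated with a toy ring buffer. This is a minimal sketch, not the psdaq implementation: the `BatchPool` class, its method names, and the pool size of 8 are all hypothetical.

```python
class BatchPool:
    """Toy ring buffer: slot = batch_id % size, one owner per slot."""

    def __init__(self, size):
        self.size = size
        self.owner = [None] * size  # batch id currently holding each slot

    def allocate(self, batch_id):
        """Claim the slot for batch_id; False means the caller would block."""
        slot = batch_id % self.size
        if self.owner[slot] is not None:
            return False  # an older batch is still waiting to be released
        self.owner[slot] = batch_id
        return True

    def release(self, batch_id):
        """Free the slot once the matching Result has arrived."""
        slot = batch_id % self.size
        if self.owner[slot] == batch_id:
            self.owner[slot] = None


pool = BatchPool(size=8)
pool.allocate(3)                  # Input sent to the TEB, Result pending
# The TEB timed the event out, so no Result for batch 3 ever arrives.
print(pool.allocate(3 + 8))       # False: the later batch wraps onto slot 3
pool.release(3)                   # only a Result (or a timeout) would free it
print(pool.allocate(3 + 8))       # True
```

In this picture the proposed EbReceiver timeout would amount to calling `release` without a Result, which keeps the system moving at the cost of the harder-to-debug failure modes mentioned above.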

A thought from Ric on how shmem buffer sizes are calculated:

- If the buffer for the Configure dgram (in /usr/lib/systemd/system/tdetsim.service) is specified to be larger, it wins. Otherwise it’s the Pebble buffer size. The Pebble buffer size is derived from the tdetsim.service cfgSize, unless it’s overridden by the pebbleBufSize kwarg to the drp executable


On 4/1/22 there was an unusual crash of the DAQ in SRCF. The system seemed to start up and run normally for a short while (according to grafana plots), but the AMI sources panel didn't fill in. Then many (all?) DRPs crashed (ProcStat Status window) due to not receiving a buffer in which to deposit their SlowUpdate contributions (log files). The ami-node_0 log file shows exceptions '*** Corrupt xtc: namesid 0x501 not found in NamesLookup' and similar messages. The issue was traced to there being 2 MEBs running on the same node. Both used the same 'tag' for the shared memory (the instrument name, 'tst'), which probably led to some internal confusion. Moving one of the MEBs to another node resolved the problem. Giving one of the MEBs a non-default tag (-t option) also solves the problem and allows both MEBs to run on the same node.

Pebble Buffer Count Error

Summary: if you see "One or more DRPs have pebble buffer count > the common RoG's" in the teb log file, it means that the common readout group's pebble buffer count needs to be made the largest, either by modifying the .service file or by setting the pebbleBufCount kwarg. Note that only one of the detectors in the common RoG needs to have at least as many buffers as the non-common RoG (see the example that follows).

More detailed example/explanation from Ric:

case A works (detector with number of pebble buffers):

group0: timing (8192) piran (1M)

group3: bld (1M)

case B breaks:

group0: timing (8192)

group3: bld (1M) piran (1M)

The teb needs buffers in which to put its answers, both locally and on the drp. The teb sends back an index to all drp's that is identical, so even timing allocates space for 1M teb answers (it learns through collection that the piranha has 1M and allocates the same).

The workaround was to increase the dma buffers in tdetsim.service, but this potentially wastes them. Only the pebble buffers really need to be increased, which can be done individually on the command line of the drp executable with the pebbleBufCount kwarg.

Note that the teb compares the SUM of tx/rx buffers, which is what the pebble buffer count gets set to by default, unless overridden with the pebbleBufCount kwarg.
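The rule behind cases A and B can be written down as a short check. This is a sketch of the rule as described in these notes, not the teb's actual code: the function name `common_rog_ok` and the dictionary layout are hypothetical, and "1M" is assumed to mean 2**20.

```python
def common_rog_ok(groups, common=0):
    """True when no DRP outside the common readout group has a larger
    pebble buffer count than the largest count inside the common group."""
    largest_common = max(groups[common].values())
    return all(count <= largest_common
               for rog, detectors in groups.items() if rog != common
               for count in detectors.values())


M = 1 << 20  # assumption: "1M" buffers means 2**20

case_a = {0: {"timing": 8192, "piran": M}, 3: {"bld": M}}
case_b = {0: {"timing": 8192}, 3: {"bld": M, "piran": M}}

print(common_rog_ok(case_a))  # True: piran in group0 matches bld's count
print(common_rog_ok(case_b))  # False: group0's largest count is only 8192
```

Only the maximum within the common group matters, which is why a single large detector in group0 is enough to make case A work.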

BOS

See Matt's information: Calient S320 ("The BOS")

- Create cross-connections:

curl --cookie PHPSESSID=ue5gks2db6optrkputhhov6ae1 -X POST --header "Content-Type: application/json" --header "Accept: application/json" -d "{\"in\": \"1.1.1\",\"out\": \"6.1.1\",\"dir\": \"bi\",\"band\": \"O\"}" --user admin:pxc*** "http://osw-daq-calients320.pcdsn/rest/crossconnects/?id=add"

- Activate cross-connections:

curl --cookie PHPSESSID=ue5gks2db6optrkputhhov6ae1 -X POST --header "Content-Type: application/json" --header "Accept: application/json" --user admin:pxc*** "http://osw-daq-calients320.pcdsn/rest/crossconnects/?id=activate&conn=1.1.1-6.1.1&group=SYSTEM&name=1.1.1-6.1.1"

  - This doesn't seem to work: it reports '411 - Length Required'. Use the web GUI for now.

- List cross-connections (easier to read in the web GUI):

curl --cookie PHPSESSID=ue5gks2db6optrkputhhov6ae1 -X GET --user admin:pxc*** 'http://osw-daq-calients320.pcdsn/rest/crossconnects/?id=list'

- Save cross-connections to a file:

  - Go to http://osw-daq-calients320.pcdsn/

  - Navigate to Ports→Summary

  - Click on 'Export CSV' in the upper left of the Port Summary table

  - Check in the resulting file as lcls2/psdaq/psdaq/cnf/BOS-PortSummary.csv

BOS Connection CLI

Courtesy of Ric Claus. NOTE the dashes in the "delete" since you are deleting a connection name. In the "add" the two ports are joined together with a dash to create the connection name, so order of the ports matters.

| Code Block |

|---|

bos delete --deactivate 1.1.7-5.1.2

bos delete --deactivate 1.3.6-5.4.8

bos add --activate 1.3.6 5.1.2

bos add --activate 1.1.7 5.4.8 |
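The naming rule in the note above (the connection name is the two ports joined with a dash, in order) can be sketched as a helper that generates the delete/add lines for a fiber swap. The function names are hypothetical; only the `bos delete --deactivate` and `bos add --activate` forms shown above are taken from this page.

```python
def connection_name(first_port, second_port):
    """Connection name as used by 'bos delete': ports joined by a dash.
    Order matters, so 1.3.6-5.1.2 and 5.1.2-1.3.6 are different names."""
    return f"{first_port}-{second_port}"


def swap_commands(old_connections, new_pairs):
    """Return the bos command lines that remove the old connections (by
    name) and then create the new ones (by port pair)."""
    commands = [f"bos delete --deactivate {name}" for name in old_connections]
    commands += [f"bos add --activate {a} {b}" for a, b in new_pairs]
    return commands


old = [connection_name("1.1.7", "5.1.2"), connection_name("1.3.6", "5.4.8")]
new = [("1.3.6", "5.1.2"), ("1.1.7", "5.4.8")]
for line in swap_commands(old, new):
    print(line)
```

Run as written, this prints the same four commands as the code block above.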

XPM

Link qualification

Looking at the eye diagram or bathtub curves gives a really good picture of the quality of the high-speed links on the Rx side. The pyxpm_eyediagram.py tool provides a way to generate these plots for the SFPs while the XPM is receiving real data.

...

Larry thinks that these are in the raw units read out from the device (mW) and says that to convert to dBm use the following formula: 10*log10(val/1mW). For example, 0.6 corresponds to -2.2dBm. The same information is now displayed with xpmpva in the "SFPs" tab.

| Code Block |

|---|

(ps-4.1.2) tmo-daq:scripts> pvget DAQ:NEH:XPM:0:SFPSTATUS

DAQ:NEH:XPM:0:SFPSTATUS 2021-01-13 14:36:15.450

LossOfSignal ModuleAbsent TxPower RxPower

0 0 6.5535 6.5535

1 0 0.5701 0.0001

0 0 0.5883 0.7572

0 0 0.5746 0.5679

0 0 0.8134 0.738

0 0 0.6844 0.88

0 0 0.5942 0.4925

0 0 0.5218 0.7779

1 0 0.608 0.0001

0 0 0.5419 0.3033

1 0 0.6652 0.0001

0 0 0.5177 0.8751

1 1 0 0

0 0 0.7723 0.201 |
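The mW-to-dBm conversion quoted above can be applied to the TxPower/RxPower columns with a few lines of Python. This is a small sketch that assumes, as Larry suggests, that the values are in mW; the function name `mw_to_dbm` is just for illustration.

```python
import math


def mw_to_dbm(power_mw):
    """Convert a power in mW to dBm: 10*log10(val / 1 mW).
    Zero (e.g. an absent module) has no finite dBm value."""
    if power_mw <= 0:
        return float("-inf")
    return 10.0 * math.log10(power_mw)


print(round(mw_to_dbm(0.6), 1))     # -2.2, the example given above
print(round(mw_to_dbm(0.0001), 1))  # -40.0, the RxPower of the LossOfSignal rows
```

On this scale the 0.0001 readings that accompany LossOfSignal=1 sit at -40 dBm, far below the roughly -1 to -7 dBm of the working links in the table.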

Programming Firmware

From Matt. He says the current production version (which still suffers from xpm-link-glitch storms) is 0x030504. The git repo with firmware is here:

https://github.com/slaclab/l2si-xpm

Please remember to stop the pyxpm process associated with the xpm before proceeding.

Connect to tmo-daq as tmoopr and use procmgr stop neh_-base.cnf pyxpm-xx.

| Code Block |

|---|

ssh drp-neh-ctl01 (with ethernet access to the ATCA switch; or drp-srcf-mon001 for production hutches)

~weaver/FirmwareLoader/rhel6/FirmwareLoader -a <XPM_IPADDR> <MCS_FILE> (binary copied from afs)

ssh psdev

source /cds/sw/package/IPMC/env.sh

fru_deactivate shm-fee-daq01/<SLOT>

fru_activate shm-fee-daq01/<SLOT>

The MCS_FILE can be found at:

/cds/home/w/weaver/mcs/xpm/xpm-0x03060000-20231009210826-weaver-a0031eb.mcs

/cds/home/w/weaver/mcs/xpm/xpm_noRTM-0x03060000-20231010072209-weaver-a0031eb.mcs |

Incorrect Fiducial Rates

In Jan. 2023 Matt saw a failure mode where xpmpva showed 2kHz fiducial rate instead of the expected 930kHz. This was traced to an upstream accelerator timing distribution module being uninitialized.

In April 2023, DAQs run on SRCF machines had 'PGPReader: Jump in complete l1Count' errors. Matt found XPM:0 receiving 929kHz of fiducials but only transmitting 22.5kHz, which he thought was due to CRC errors on its input. Also XPM:0's FbClk seemed frozen. Matt said:

I could see the outbound fiducials were 22.5kHz by clicking one of the outbound ports LinkLoopback on. The received rate on that outbound link is then the outbound fiducial rate.

At least now we know this error state is somewhere within the XPM and not upstream.

The issue was cleared up by resetting XPM:0 with fru_deactivate/activate to clear up a bad state.

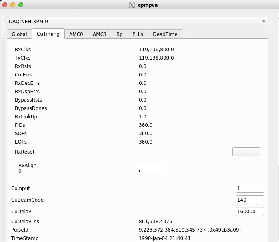

Note that when the XPMs are in a good state, the following values should be seen:

- Global tab:

- RecClk: 185 MHz

- FbClk: 185 MHz

- UsTiming tab:

- RxClks: 185 MHz

- RxLinkUp: 1

- CrcErrs: 0

- RxDecErrs: 0

- RxDspErrs: 0

- FIDs: 929 kHz

- SOFs: 929 kHz

- EOFs: 929 kHz
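The good-state values listed above lend themselves to a simple automated comparison. The following is only a sketch: how the readings are obtained (for example from xpmpva or pvget) is not shown, the function name `xpm_problems` is hypothetical, and the 1% tolerance on clocks and rates is an assumption, since these notes do not give one.

```python
# Expected readings copied from the list above (clocks in MHz, rates in kHz).
EXPECTED = {
    "RecClk": 185.0, "FbClk": 185.0, "RxClks": 185.0,
    "RxLinkUp": 1,
    "CrcErrs": 0, "RxDecErrs": 0, "RxDspErrs": 0,
    "FIDs": 929.0, "SOFs": 929.0, "EOFs": 929.0,
}
RELATIVE_TOLERANCE = 0.01  # assumption: not specified in these notes


def xpm_problems(readings):
    """Return a list of mismatches; an empty list means a good state."""
    problems = []
    for name, want in EXPECTED.items():
        got = readings.get(name)
        if got is None:
            problems.append(f"{name}: no reading")
        elif isinstance(want, float):
            # Clocks and rates: allow a small relative deviation.
            if abs(got - want) > RELATIVE_TOLERANCE * want:
                problems.append(f"{name}: got {got}, expected about {want}")
        elif got != want:
            # Flags and error counters must match exactly.
            problems.append(f"{name}: got {got}, expected {want}")
    return problems


good = dict(EXPECTED)
bad = dict(good, FIDs=2.0)  # hypothetical reading like the Jan. 2023 failure
print(xpm_problems(good))   # []
print(xpm_problems(bad))    # ['FIDs: got 2.0, expected about 929.0']
```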

No RxRcv/RxErr Frames in xpmpva

If RxRcv/RxErr frames are stuck in xpmpva it may be that the network interface to the ATCA crate is not set up for jumbo frames.

Link Issues

If XPM links don't lock, here are some past causes:

- check that transceivers (especially QSFP, which can be difficult) are fully plugged in.

- for opal detectors:

- use devGui to toggle between xpmmini/LCLS2 timing (Matt has added this to the opal config script, but to the part that executes at startup time)

- hit TxPhyReset in the devGui (this is now done in the opal drp executable)

- if timing frames are stuck in a camlink node, hitting TxPhyPllReset started the timing frame counters going (and it is lighter-weight than the xpmmini→lcls2 timing toggle)

- on a TDet node found "kcusim -T" (reset timing PLL) made a link lock

- for timing system detectors: run "kcuSim -s -d /dev/datadev_1", this should also be done when one runs a drp process on the drp node (to initialize the timing registers). the drp executable in this case doesn't need any transitions.

- hit Tx/Rx reset on xpmpva gui (AMC tabs).

- use loopback fibers (or click a loopback checkbox in xpmpva) to determine which side has the problem

- try swapping fibers in the BOS to see if the problem is on the xpm side or the kcu side

- we once had to power cycle a camlink drp node to make the xpm timing link lock. Matt suggests that perhaps hitting PLL resets in the rogue gui could be a more delicate way of doing this.

- (old information with the old/broken BOS) Valerio and Matt had noticed that the BOS sometimes lets its connections deteriorate. To fix:

- ssh root@osw-daq-calients320

- omm-ctrl --reset

Timing Frames Not Properly Received

- do TXreset on appropriate port

- toggling between xpmmini and lcls2 timing can fix (we have put this in the code now, previously was lcls1-to-lcls2 timing toggle in the code)

- sometimes xpm's have become confused and think they are receiving 26MHz timing frames when they should be 0.9MHz (this can be seen in the upstream-timing tab of xpmpva, "UsTiming"). You can determine which xpm is responsible by putting each link in loopback mode: if it is working properly you should see 0.9MHz of rx frames in loopback mode (normally 20MHz of frames in normal mode). Proceed upstream until you find a working xpm, then do tx resets (and rx?) downstream to fix them.

Network Connection Difficulty

Saw this error on Nov. 2 2021 in lab3 over and over:

| Code Block |

|---|

WARNING:pyrogue.Device.UdpRssiPack.rudpReg:host=10.0.2.102, port=8193 -> Establishing link ... |

Matt writes:

That error could mean that some other pyxpm process is connected to it. Using ping should show if the device is really off the network, which seems to be the case. You can also use "amcc_dump_bsi --all shm-tst-lab2-atca02" to see the status of the ATCA boards from the shelf manager's view. (source /afs/slac/g/reseng/IPMC/env.sh[csh] or source /cds/sw/package/IPMC/env.sh[csh]) It looks like the boards in slots 2 and 4 had lost ethernet connectivity (with the ATCA switch) but should be good now. None of the boards respond to ping, so I'm guessing its the ATCA switch that's failed. The power on that board can also be cycled with "fru_deactivate, fru_activate". I did that, and now they all respond to ping.

Firmware Varieties and Switching Between Internal/External Timing

NOTE: these instructions only apply for XPM boards running "xtpg" firmware. This is the only version that supports internal timing for the official XPM boards. It has a software-selectable internal/external timing using the "CuInput" variable. KCU1500's running the xpm firmware have a different image for internal timing with "Gen" in the name (see /cds/home/w/weaver/mcs/xpm/*Gen*, which currently contains only a KCU1500 internal-timing version).

If the xpm board is in external mode in the database we believe we have to reinitialize the database by running:

python pyxpm_db.py --inst tmo --name DAQ:NEH:XPM:10 --prod --user tmoopr --password pcds --alias XPM

CuInput flag (DAQ:NEH:XPM:0:XTPG:CuInput) is set to 1 (for internal timing) instead of 0 (external timing with first RTM SFP input, presumably labelled "EVR[0]" on the RTM, but we are not certain) or 3 (second RTM SFP timing input labelled "EVR[1]" on the RTM).

Matt says there are three types of XPM firmware: (1) an XTPG version which requires an RTM input (2) a standard XPM version which requires RTM input (3) a version which gets its timing input from AMC0 port 0 (with "noRTM" in the name). The xtpg version can take lcls1 input timing and convert to lcls2 or can generate internal lcls2 timing. Now that we have switched the tmo/rix systems to lcls2 timing this version is not needed anymore: the "xpm" firmware version should be used. The one exception is the detector group running in MFX from LCLS1 timing which currently uses xpm7 running xtpg firmware.

This file puts xpm-0 in internal timing mode: https://github.com/slac-lcls/lcls2/blob/master/psdaq/psdaq/cnf/internal-neh-base.cnf. Note that in internal timing mode the L0Delay (per-readout-group) seems to default to 90. Fix it with "pvput DAQ:NEH:XPM:0:PART:0:L0Delay 80".

One should switch back to external mode by setting CuInput to 0 in the xpmpva CuTiming tab. You still want to switch to the external-timing cnf file after this is done. Check that the FiducialErr box is not checked (try ClearErr to see if it fixes it). If this doesn't clear, it can be a sign that ACR has put in the "wrong divisor" on their end.

...