Useful links

Technical

- SDF guide and documentation, particularly on using Jupyter notebooks interactively or through the web interface (runs on top of nodes managed by SLURM)

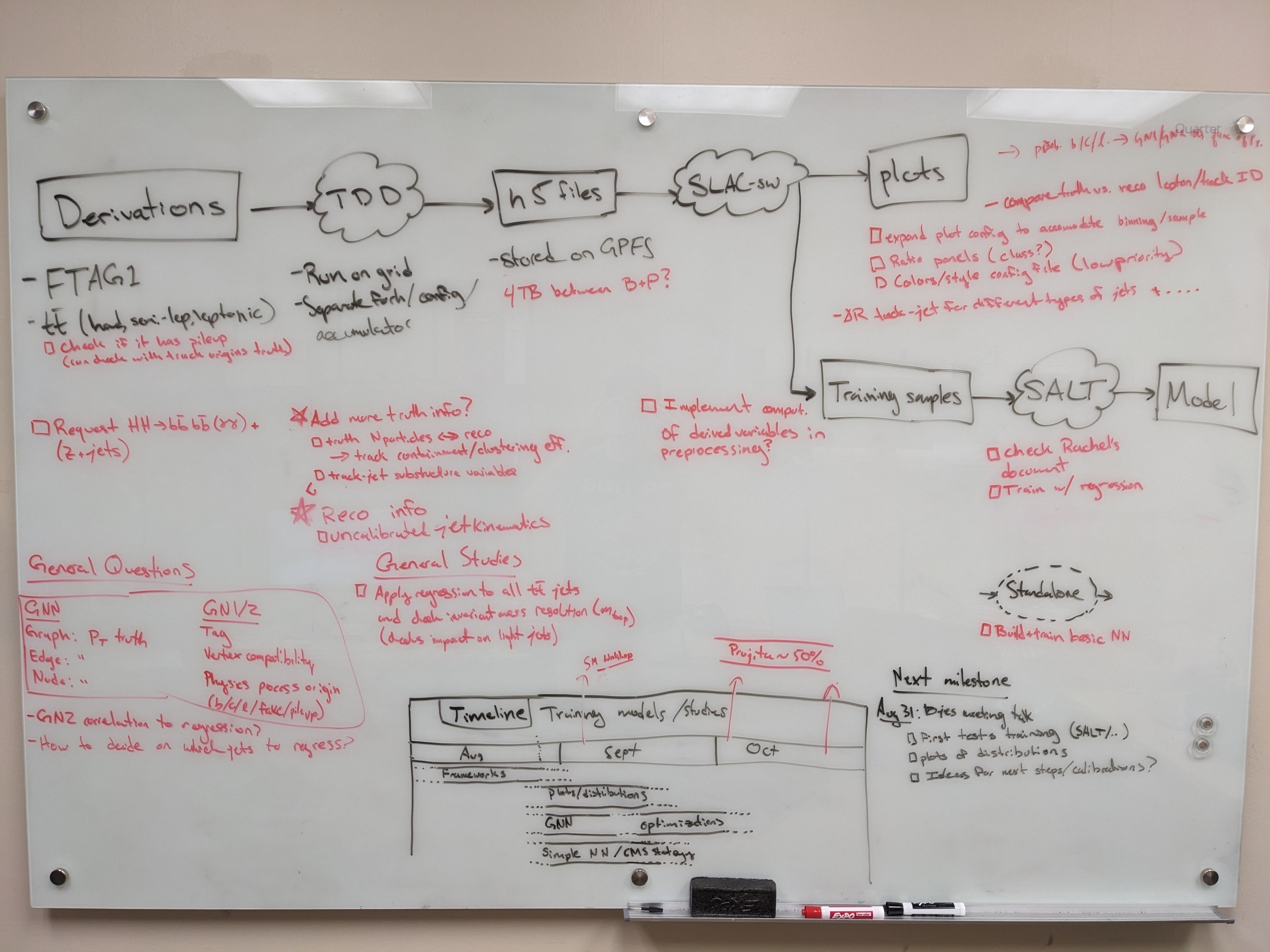

- Training dataset dumper (used for producing h5 files from FTAG derivations) documentation and git (Prajita's fork, bjet_regression is the main branch)

- SALT documentation, SALT on SDF, puma git repo (used for plotting), and Umami docs (for postprocessing), also umami-preprocessing (UPP)

- SLAC GitLab group for plotting related code

- FTAG1 derivation definition (FTAG1.py)

...

- See all B-jet calibration meetings on Indico

- Framework experience (Prajita, July 6)

- Plans (Prajita, July 13)

- What needs to be added to JETM2 (August 17)

Planning

SDF preliminaries

Compute resources

SDF has a shared queue for submitting jobs via slurm, but this partition has extremely low priority. Instead, use the usatlas partition, or ask Michael Kagan for access to the atlas partition.
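As a minimal sketch of how a job might target the usatlas partition, the helper below writes a small batch script. The helper name make_batch_script, the job name, and the resource values are placeholders chosen for illustration, not SDF recommendations; check the SDF guide for any account or QOS settings that may also be required.

```shell
# Write a minimal SLURM batch script that targets the given partition.
# Usage: make_batch_script <partition> <output file>
# NOTE: job name and resource requests below are illustrative placeholders.
make_batch_script() {
  local partition=$1 outfile=$2
  cat > "$outfile" <<EOF
#!/bin/bash
#SBATCH --partition=${partition}
#SBATCH --job-name=bjr-test
#SBATCH --cpus-per-task=4
#SBATCH --gpus=1
#SBATCH --time=01:00:00

echo "Running on \$(hostname) in partition \${SLURM_JOB_PARTITION}"
EOF
}
```

The generated file can then be submitted with, for example, `make_batch_script usatlas job.sh && sbatch job.sh`.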

...

| Code Block |

|---|

export CONDA_PREFIX=/sdf/group/atlas/sw/conda

export PATH=${CONDA_PREFIX}/bin/:$PATH

source ${CONDA_PREFIX}/etc/profile.d/conda.sh

conda env list

conda activate bjr_v01 |
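Before activating, it can be handy to confirm that the environment is actually visible. A small sketch, where conda_env_listed is a hypothetical helper (not part of conda) that scans the output of conda env list for an exact name match:

```shell
# Succeed if the named environment appears in `conda env list` output
# supplied on stdin. The environment name is the first column of each row;
# header lines start with '#' and therefore never match.
conda_env_listed() {
  local name=$1
  awk -v n="$name" '$1 == n { found = 1 } END { exit !found }'
}
```

For example: `conda env list | conda_env_listed bjr_v01 && conda activate bjr_v01`.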

Producing H5 samples

We are using a custom fork of training-dataset-dumper, developed for producing h5 files for NN training based on FTAG derivations.

The custom fork is modified to store the truth jet pT via the AntiKt4TruthDressedWZJets container.

...

| Code Block |

|---|

/gpfs/slac/atlas/fs1/d/pbhattar/BjetRegression/Input_Ftag_Ntuples

├── Rel22_ttbar_AllHadronic

├── Rel22_ttbar_DiLep

└── Rel22_ttbar_SingleLep |
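To get a quick overview of what is available under this directory, something like the following could be used. It is only a sketch: the helper name count_files_per_sample is made up here, and the default `*.h5` pattern assumes the sample directories hold h5 files, which may not match the actual contents.

```shell
# Print "<sample directory> <number of matching files>" for each
# subdirectory of the given base directory.
# Usage: count_files_per_sample <base dir> [file pattern, default *.h5]
count_files_per_sample() {
  local base=$1 pattern="${2:-*.h5}" sample n
  for sample in "$base"/*/; do
    # Skip the unexpanded glob when the base directory has no subdirectories.
    [ -d "$sample" ] || continue
    n=$(find "$sample" -type f -name "$pattern" | wc -l | tr -d ' ')
    printf '%s %s\n' "$(basename "$sample")" "$n"
  done
}
```

For example: `count_files_per_sample /gpfs/slac/atlas/fs1/d/pbhattar/BjetRegression/Input_Ftag_Ntuples`.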

...

Plotting with Umami

...

/Puma

Plotting with umami

Umami (which relies on puma internally) is capable of producing plots based on yaml configuration files.

SALT likes to take preprocessed data files in the format produced by Umami. The best (read: only) way to use umami out of the box is via a Docker container (run through Singularity on SDF). To configure this on SDF following the docs, add the following to your .bashrc:

| Code Block |

|---|

export SDIR=/scratch/${USER}/.singularity

mkdir -p "$SDIR"

export SINGULARITY_LOCALCACHEDIR=$SDIR

export SINGULARITY_CACHEDIR=$SDIR

export SINGULARITY_TMPDIR=$SDIR |

Since cvmfs is mounted on SDF, you can use the latest tagged version of the Singularity container directly from there.

To install umami, clone the repository (which is forked in the slac-bjr project): `git clone ssh://git@gitlab.cern.ch:7999/slac-bjr/umami.git`

Now set up umami and run the Singularity image:

| Code Block |

|---|

source run_setup.sh

|

| Code Block |

|---|

singularity shell /cvmfs/unpacked.cern.ch/gitlab-registry.cern.ch/atlas-flavor-tagging-tools/algorithms/umami/umamibase-plus:0-20 |

There is no need to clone the umami repo unless you want to edit the code.


Plotting exercises can be followed in the umami tutorial.

Plotting with puma standalone

Puma can be used to produce plots in a more manual way. Installing it by following the nominal instructions in the docs proved difficult. What I (Brendon) found to work was:

| Code Block |

|---|

conda create -n puma python=3.11

conda activate puma

pip install puma-hep

pip install atlas-ftag-tools |

This took quite some time to run, so (again) save yourself the effort and use the precompiled environments.

Preprocessing

SALT likes to take preprocessed data files in the format produced by Umami (though in principle the format is the same as what's produced by the training dataset dumper).

An alternative to using umami for preprocessing (and supposedly the better way to go) is the standalone umami-preprocessing (UPP).

Follow the installation instructions on git. This is unfortunately hosted on GitHub, so we cannot add it to the slac-bjr GitLab group.

You can cut to the chase by doing conda activate upp, or follow the docs for straightforward installation.

Notes on logic of the preprocessing config files

There are several aspects of the preprocessing config that are not documented (yet). Most things are self-explanatory, but note that:

- within the FTag software,

...

- there exist flavor classifications (for example lquarkjets, bjets, cjets, taujets, etc). These can be used to define different sample components. Further selections based on kinematic cuts can be made through the `region` key.

- It appears only 2 features can be specified for the resampling (nominally pt and eta).

- The

...

- binning also appears not to be respected for the variables used in resampling.

...

...

Model development with SALT

The slac-bjr git project contains a fork of SALT. One can follow the SALT documentation for general installation/usage. Some specific notes can be found below:

Creating conda environment:

One can use the environment salt that has been set up in /gpfs/slac/atlas/fs1/d/bbullard/conda_envs; otherwise you may need to run `conda install -c conda-forge jsonnet h5utils`. Note that the shared environment was built using the latest master on Aug 28, 2023.

...

To solve it, you can do the following (again, this is already available in the common salt environment):

| Code Block |

|---|

conda install chardet

conda install --force-reinstall -c conda-forge charset-normalizer=3.2.0 |

Testing SALT

Interactive testing: training on interactive nodes is discouraged, but if you need it for some validation you can run `salt fit --config configs/GNX-bjr.yaml --trainer.fast_dev_run 2`. If you get as far as an error saying that memory cannot be allocated, you have at least vetted the configuration against other errors.
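The quick check described above can be wrapped in a small helper, sketched here. The function name salt_smoke_test is hypothetical; it only forwards to the salt fit command quoted above and assumes salt is on your PATH (for example after activating the salt environment).

```shell
# Run a short smoke test of a SALT training config via fast_dev_run.
# Usage: salt_smoke_test <config.yaml> [number of batches, default 2]
salt_smoke_test() {
  local config=$1 n_batches=${2:-2}
  # Fail early with a clear message instead of a traceback from salt.
  if [ ! -f "$config" ]; then
    echo "config not found: $config" >&2
    return 1
  fi
  salt fit --config "$config" --trainer.fast_dev_run "$n_batches"
}
```

For example: `salt_smoke_test configs/GNX-bjr.yaml`.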

Analyzing H5 samples

Notebooks

...

instead to open a terminal on an SDF node by starting a Jupyter notebook and going to New > Terminal. From here, you can test your training configuration.

Be aware that running a terminal on an SDF node will reduce your priority when submitting jobs to slurm.

Note that you must be careful about the number of workers you select (in the PyTorch trainer object): it should be <= the number of CPU cores you're using. Using more CPU cores parallelizes the data loading, which can be the primary bottleneck in training. The number of requested GPUs should match the number of devices used in the training.
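One way to keep the worker count within the allocated cores is to cap it in the job script, as in this sketch. The helper name cap_num_workers is hypothetical. It reads SLURM_CPUS_PER_TASK, which SLURM sets only when the job requests --cpus-per-task, and otherwise falls back to nproc.

```shell
# Print the requested number of data-loading workers, capped at the number
# of CPU cores available to the job.
# Usage: cap_num_workers <requested workers>
cap_num_workers() {
  local requested=$1
  local cores=${SLURM_CPUS_PER_TASK:-$(nproc)}
  if [ "$requested" -gt "$cores" ]; then
    echo "$cores"
  else
    echo "$requested"
  fi
}
```

The result could then be passed to the training command, for instance `salt fit --config configs/GNX-bjr.yaml --data.num_workers "$(cap_num_workers 8)" --trainer.devices 1`. This assumes the fork accepts a `--data.num_workers` override and that one GPU was requested, neither of which is confirmed here.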

Miscellaneous tips

You can grant read/write access to GPFS data directories to ATLAS group members via the following (note that this does not work for the SDF home folder):

...