...

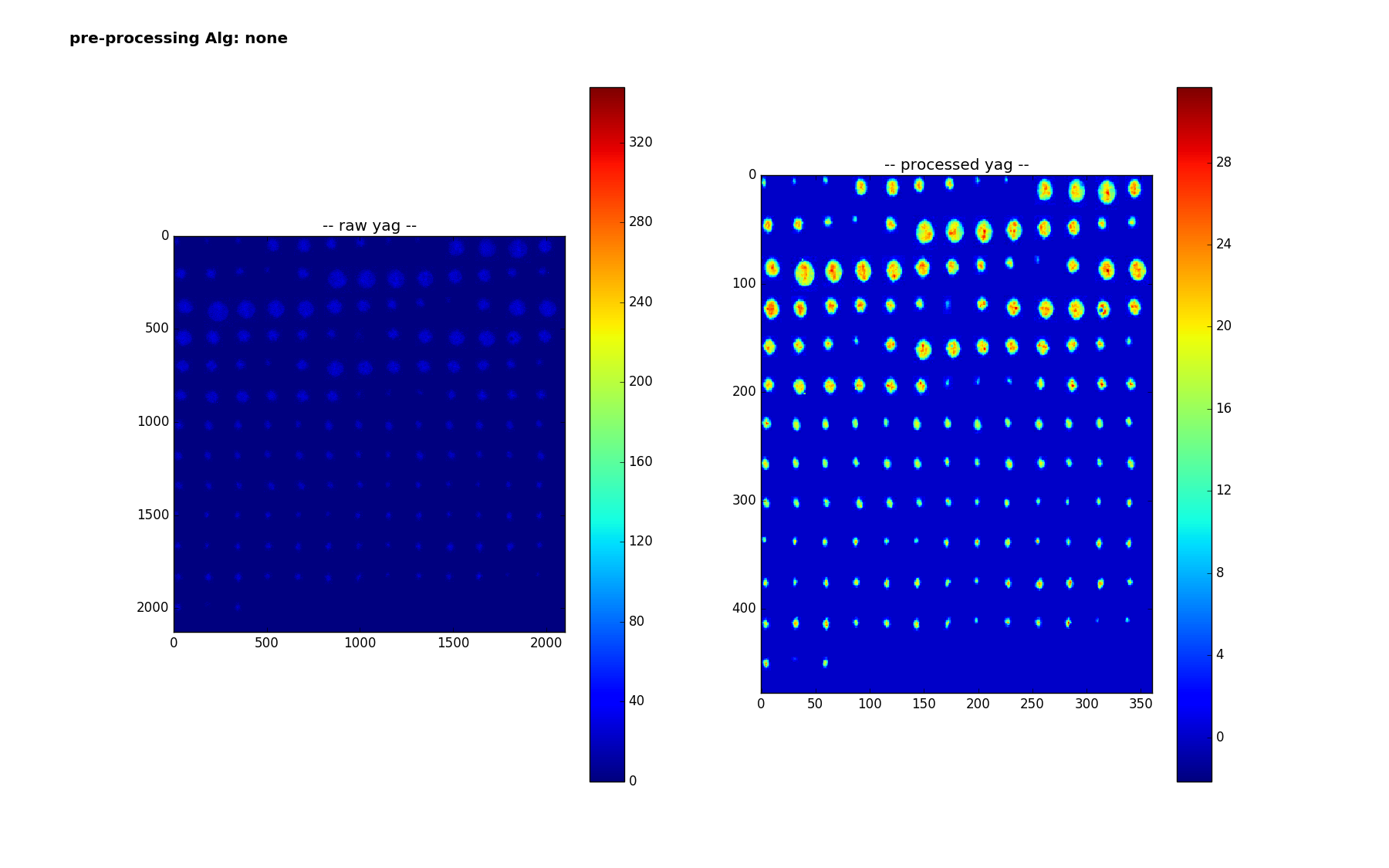

Here are the 159 samples of the yag with a box. Here we are using 'lanczos' to reduce from the much larger size of 1040 x 1392 to (224,224). It is interesting to note how the colorbar changes: the range no longer goes up to 320. I think the 320 values were isolated pixels that get washed out? Or else there is something else I don't understand. We are doing nothing more than scipy.misc.imresize(img,(224,224), interp='lanczos',mode='F'), but img is np.uint16 after careful background subtraction (going through float32, thresholding at 0 before converting back).
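One way to check the "isolated pixels get washed out" guess is to resize a synthetic frame that is zero everywhere except for a single bright pixel. The sketch below is not the code used for the plots: scipy.misc.imresize has been removed from recent SciPy releases, so it uses Pillow's Lanczos resize as a stand-in, and the function name resize_lanczos and the synthetic frame are made up for illustration.

```python
import numpy as np
from PIL import Image


def resize_lanczos(img, size=(224, 224)):
    """Downsample a 2-D array to `size` (rows, cols) with a Lanczos filter.

    Assumption: this stands in for the original
    scipy.misc.imresize(img, size, interp='lanczos', mode='F') call.
    """
    # A 2-D float32 array becomes a Pillow mode 'F' image (no byte scaling).
    im = Image.fromarray(np.asarray(img, dtype=np.float32))
    # Newer Pillow exposes the filters under Image.Resampling, older under Image.
    resampling = getattr(Image, "Resampling", Image)
    # Pillow expects (width, height), i.e. (cols, rows).
    im = im.resize((size[1], size[0]), resample=resampling.LANCZOS)
    return np.asarray(im)


# Synthetic frame (not real data): all zeros with one isolated bright pixel.
frame = np.zeros((1040, 1392), dtype=np.uint16)
frame[500, 700] = 320
small = resize_lanczos(frame)
print("max before:", frame.max(), "max after:", small.max())
```

When downsampling, each output pixel is a weighted average over a neighbourhood of input pixels, so a single-pixel spike is spread over its neighbours and the maximum drops. If the real images show the same behaviour, that would support the isolated-pixel explanation.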

more stuff

Pipeline

Regression/box prediction

The processing pipeline for the regression

- choose a preprocessing algorithm

- create vgg16 codeword1 and 2 (8192 numbers: the 4096 outputs of each of the last two fully connected layers)

- separately for 'yag' and 'nm'

  - for each of the 163 (or 159) samples,

    - train a linear regression model on the remaining 162 (or 158) samples

      - map from 8192 variables to 4

    - use it to predict a box for the omitted sample

    - optionally, limit the input features: reject features with variance less than a threshold

      - (maybe they are noisy and throwing off the regression)
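The leave-one-out loop above can be sketched as follows. This is a minimal illustration under assumptions, not the code behind the results: it uses plain NumPy least squares (which returns the minimum-norm solution when there are more features than samples), the name leave_one_out_boxes and the var_threshold parameter are hypothetical, and the demo data is random rather than real codewords.

```python
import numpy as np


def leave_one_out_boxes(features, boxes, var_threshold=None):
    """Predict each sample's box from a linear fit on all the other samples.

    features: (n_samples, n_features) codeword array
    boxes:    (n_samples, 4) box parameters
    var_threshold: if given, drop features whose training-set variance
                   is below this value (hypothetical parameter name)
    """
    features = np.asarray(features, dtype=np.float64)
    boxes = np.asarray(boxes, dtype=np.float64)
    n = features.shape[0]
    preds = np.empty_like(boxes)
    for i in range(n):
        train = np.arange(n) != i          # every sample except sample i
        X, Y = features[train], boxes[train]
        x = features[i:i + 1]
        if var_threshold is not None:
            keep = X.var(axis=0) >= var_threshold
            X, x = X[:, keep], x[:, keep]
        # Append a column of ones so the fit includes an intercept.
        Xb = np.hstack([X, np.ones((X.shape[0], 1))])
        xb = np.hstack([x, np.ones((1, 1))])
        W, *_ = np.linalg.lstsq(Xb, Y, rcond=None)
        preds[i] = (xb @ W)[0]
    return preds


# Synthetic check (not real data): boxes are an exact linear function of
# the features, so every held-out prediction should match the truth.
rng = np.random.default_rng(0)
demo_features = rng.normal(size=(30, 5))
demo_boxes = demo_features @ rng.normal(size=(5, 4)) + 3.0
demo_preds = leave_one_out_boxes(demo_features, demo_boxes, var_threshold=1e-6)
print(np.abs(demo_preds - demo_boxes).max())
```

The variance mask is computed on the training samples only, so the omitted sample never influences which features are kept.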

Measure accuracy

For localization, on a shot-by-shot basis where we are comparing boxA to boxB, one typically calculates the ratio of the area of the intersection to the area of the union. For imagenet competitions, a shot/image counts as a success when inter/union >= 0.5, and those predictions look quite good! One can then come up with an overall accuracy based on the inter/union threshold. Below, we report accuracy for different thresholds, 0.5, 0.2 and 0.01; the latter is to see how accurate we are at getting any overlap.
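As a concrete version of the inter/union measure, here is a minimal sketch. It assumes each box is stored as (xmin, ymin, xmax, ymax), which is an assumption about the four regression targets, and the function names box_iou and accuracy_at_thresholds are made up for illustration.

```python
import numpy as np


def box_iou(box_a, box_b):
    """Intersection area over union area for two axis-aligned boxes.

    Assumption: boxes are (xmin, ymin, xmax, ymax).
    """
    inter_w = max(0.0, min(box_a[2], box_b[2]) - max(box_a[0], box_b[0]))
    inter_h = max(0.0, min(box_a[3], box_b[3]) - max(box_a[1], box_b[1]))
    inter = inter_w * inter_h
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def accuracy_at_thresholds(ious, thresholds=(0.5, 0.2, 0.01)):
    """Fraction of shots whose inter/union meets each threshold."""
    ious = np.asarray(ious, dtype=np.float64)
    return {t: float(np.mean(ious >= t)) for t in thresholds}


# Two 2x2 boxes offset by one unit in x and y: intersection 1, union 7.
print(box_iou((0, 0, 2, 2), (1, 1, 3, 3)))
print(accuracy_at_thresholds([0.6, 0.3, 0.05, 0.0]))
```

Because the thresholds are nested, the accuracy can only go up as the threshold is lowered, so the 0.01 figure is an upper bound on the other two.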

To visualize the results, we make a plot similar to the one above, but draw the truth box in white and the predicted box in red.
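A plot of this kind could be drawn with matplotlib roughly as below. This is only a sketch: the helper name plot_boxes is hypothetical, and the boxes are again assumed to be (xmin, ymin, xmax, ymax) in pixel coordinates of the resized image.

```python
import matplotlib.pyplot as plt
import numpy as np
from matplotlib.patches import Rectangle


def plot_boxes(img, truth_box, pred_box, ax=None):
    """Show img with the truth box in white and the predicted box in red.

    Assumption: boxes are (xmin, ymin, xmax, ymax) in pixel coordinates.
    """
    if ax is None:
        _, ax = plt.subplots()
    shown = ax.imshow(img, interpolation="none")
    ax.figure.colorbar(shown, ax=ax)
    for box, color in ((truth_box, "white"), (pred_box, "red")):
        xmin, ymin, xmax, ymax = box
        ax.add_patch(Rectangle((xmin, ymin), xmax - xmin, ymax - ymin,
                               fill=False, edgecolor=color, linewidth=1.5))
    return ax
```

For example, plot_boxes(img, truth, pred) followed by plt.show() (or saving the figure) draws one sample; passing an existing ax lets many samples be tiled in a grid like the figure above.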

Results

Preprocessing=None, files=1,2,4

For the vcc, the accuracy at the 1% overlap threshold is 55%.

For the yag, the accuracy at the 1% overlap threshold is 86%:

Results - no pre-processing

With no preprocessing,